Abstract

Serial reversal-learning procedures are simple preparations that allow for a better understanding of how animals learn about environmental changes, including flexibly shifting responding to adapt to changing reinforcement contingencies. The present study examined serial reversal learning with humans by arranging both midsession and variable contingency reversals across two experiments. We also examined the effects of extinction by adding nonreinforced trials at the end of later sessions and provided the first evaluation of effects of win-stay/lose-shift versus counting strategies on accuracy and response latency of humans’ reversal-learning performance. In each experiment, responding tracked contingency reversals, primarily with participants using either win-stay/lose-shift or counting strategies. Introducing variable reversal points in the second experiment resulted in near-exclusive win-stay/lose-shift responding among participants and eliminated counting of trials. Each experiment also revealed an immediate shift from S2 to S1 after experiencing extinction during the initial test trial, indicating resurgence of the initial response through a win-stay/lose-shift response pattern. Therefore, the present study replicates and extends prior findings of a win-stay/lose-shift response pattern in situations of greater uncertainty. These findings suggest that differences in environmental certainty induce qualitatively different decision-making strategies.

Organisms face challenges to survival when environments change. Behavioral and evolutionary scientists have examined what is broadly called behavioral flexibility in the face of environmental changes across a wide range of species and conditions (see Lea et al., 2020). One general approach to examining behavioral flexibility assesses how behavior changes when responses that paid off at one time or in one setting do not pay off under other circumstances. In other words, how does operant behavior change when reinforcement contingencies change?

Reversal learning

Reversal learning is one approach to examining changes in operant behavior under changing reinforcement conditions (see Izquierdo et al., 2017, for a review). Tasks using a midsession reversal of reinforcement contingencies typically include two response alternatives and arrange a contingency reversal after a fixed number of trials (see Rayburn-Reeves & Cook, 2016; Zentall, 2020, for reviews). Responding to one location or stimulus (S1) is reinforced during trials comprising the first half of a session, and responding to a different location or stimulus (S2) is reinforced during trials comprising the second half of a session. With repeated exposure to the contingency change between S1 and S2 across sessions, responding reliably tracks those reinforcement contingencies across many species, including rats (e.g., Rayburn-Reeves et al., 2013), pigeons (e.g., R. G. Cook & Rosen, 2010), dogs (Laude et al., 2016), nonhuman primates (e.g., Rayburn-Reeves et al., 2017), and humans (e.g., McMillan & Spetch, 2019).

There are some apparent differences among species in how closely behavior tracks those contingencies before and after the midsession-contingency reversal. For example, counting the number of trials to reversal appears to be unique to humans (McMillan & Spetch, 2019; Rayburn-Reeves et al., 2011). However, humans, rats, parrots, and monkeys all have been reported to engage in a win-stay/lose-shift pattern of behavior: experiencing nonreinforcement of responding to S1 following the contingency reversal during the second half of sessions rapidly produces a shift to responding to S2 (Laschober et al., 2021; Rayburn-Reeves et al., 2011; Rayburn-Reeves et al., 2013; Rayburn-Reeves et al., 2017). These findings suggest individual response-outcome contingencies from trial to trial largely underlie discriminations driving reversal performance between S1 and S2 in these species. In contrast, pigeons and dogs have been reported to make large numbers of anticipatory errors before contingency reversals and perseverative errors following contingency reversals (R. G. Cook & Rosen, 2010; Davison & Cowie, 2019; Laude et al., 2016). These findings suggest temporal cues from the start of sessions govern discrimination of contingency reversals in pigeons and dogs to a greater extent than in rats or primates (see Zentall, 2020, for a review).

Nevertheless, several environmental conditions can influence the prevalence and pattern of anticipatory and perseverative errors during reversals across species. As examples, both rats and pigeons tend to make fewer errors during spatially defined discriminations than nonspatially defined visual discriminations (e.g., McMillan et al., 2014; McMillan & Roberts, 2012; Rayburn-Reeves et al., 2013), and error patterns between S1 and S2 change with differences in both reinforcer probability (e.g., Santos et al., 2019; Zentall, Andrews, et al., 2019a) and stimulus conditions (e.g., Rayburn-Reeves et al., 2017; Zentall, Peng, et al., 2019b). Such findings indicate that differences in error patterns are dependent on the prevailing stimulus and reinforcement conditions (see Zentall, 2020, for a review). Therefore, with some exceptions, such as counting the number of trials to reversal, differences in reversal learning among species and behavioral flexibility more generally might largely reflect quantitative rather than qualitative differences (see McMillan & Spetch, 2019). One goal of the present research was to examine the extent to which conditions of uncertainty influenced patterns of human decision-making during contingency reversals, in particular patterns of counting trials versus a win-stay/lose-shift strategy.

Humans’ use of a win-stay/lose-shift strategy has been documented across a wide variety of repeated decision scenarios that introduce conditions of uncertainty, including the Iowa gambling task (e.g., Cassotti et al., 2011; Worthy et al., 2013), the Prisoner’s Dilemma (e.g., Wedekind & Milinski, 1996), causal learning tasks (e.g., Bonawitz et al., 2014), rock paper scissors (e.g., Zhang et al., 2021), stock market predictions (Gutiérrez-Roig et al., 2016), and formation changes in football (Tamura & Masuda, 2015). Moreover, prior research on human performance during repeated decision tasks has shown that quantitative models assuming a win-stay/lose-shift strategy outperform traditional reinforcement learning models (e.g., Worthy et al., 2012; Worthy & Maddox, 2012). These findings suggest that win-stay/lose-shift is a pervasive strategy under conditions of uncertainty.

Resurgence

A related set of findings demonstrating behavioral flexibility during changes in reinforcement contingencies is an effect referred to as resurgence. Resurgence has been studied primarily as a preclinical model of relapse in which only a single contingency reversal from S1 to S2 is later followed by extinguishing the most recently reinforced response to S2 (see Lattal et al., 2017; Shahan & Craig, 2017; Wathen & Podlesnik, 2018, for reviews). The increase in S1 responding upon extinguishing S2 responding demonstrates behavioral flexibility in which a previously reinforced behavior returns despite no longer being reinforced. One goal of the present research was to examine the extent to which conditions of uncertainty influence patterns of decision-making when introducing extinction of S1 and S2 responses, in particular patterns of counting trials versus a win-stay/lose-shift strategy.

Resurgence is relevant to understanding behavioral flexibility because the return of previously successful responses is relevant to problem-solving, creativity, foraging strategies, and behavioral variation (see Shahan & Chase, 2002). For example, previously learned approaches to solving complex math problems might resurge when more recently learned approaches are ineffective (e.g., C. L. Williams & St. Peter, 2020). As with midsession reversal learning, resurgence has been demonstrated under laboratory conditions in a range of species, including zebrafish (Kuroda et al., 2017a, 2017b), Siamese fighting fish (da Silva et al., 2014), pigeons (e.g., Liddon et al., 2017), mice (Craig et al., 2020), rats (e.g., Podlesnik et al., 2019), monkeys (Mulick et al., 1976), and humans with and without intellectual disabilities (e.g., Ho et al., 2018; Podlesnik et al., 2020; Shvarts et al., 2020). Resurgence of problem behaviors also has been observed to be prevalent clinically (e.g., Briggs et al., 2018; Muething et al., 2021). In contrast to midsession reversal learning, a comparative approach to examining resurgence across species has not been employed systematically. Nevertheless, the contingency changes with both reversal learning and resurgence procedures offer an opportunity to examine counting and win-stay/lose-shift response patterns during conditions of reinforcement uncertainty. Thus, the present experiments combined reversal learning and resurgence procedures for the first time.

Present experiments

The present experiments examined S1–S2 reversal learning with humans across 10 consecutive blocks of trials (hereafter referred to as "sessions"), arranging either a fixed (Experiment 1) or variable (Experiment 2) reversal point. We expected responding to be more precisely controlled by the contingencies with greater exposure, with few anticipatory or perseverative errors toward the end of those sessions (McMillan & Spetch, 2019; Rayburn-Reeves et al., 2011; see also R. G. Cook & Rosen, 2010). During Sessions 11–15, we extended the number of trials per session following S1–S2 contingency reversals to assess how extinguishing responses to both S1 and S2 affected response patterns (cf. Bai et al., 2017). We expected that experiencing extinction of responding to S2 during those additional trials would initially yield an increase in responding to S1, analogous to a resurgence effect and consistent with a win-stay/lose-shift pattern of behavior. Therefore, the present experiments assessed patterns of reversal learning and resurgence under lower (Experiment 1) and higher (Experiment 2) levels of (un)certainty regarding the changing of reinforcement contingencies. This research was designed to answer the following research questions:

1. To what degree did performance change across trials with repeated exposure to contingency reversals?
2. To what degree did performance change when removing reinforcement for both responses during extinction testing?
3. To what degree do different response patterns reflect differences in decision-making? Specifically, how do contingency reversals and extinction induce counting versus win-stay/lose-shift strategies under conditions of lower and higher levels of (un)certainty?

Experiment 1

Experiment 1 systematically replicated Experiment 4 of Rayburn-Reeves et al. (2011) with a touchscreen computer task. During the first 10 sessions, we arranged 24 trials per session in which the reinforcement and extinction contingencies reversed predictably after Trial 12. We arranged two buttons differing in appearance, consistent with previous research on reversal learning of visual discriminations (McMillan & Spetch, 2019) and resurgence (Podlesnik et al., 2020). Therefore, the present experiment examined how contingency changes and extinction affected response patterns and decision-making strategies under relatively certain conditions.

Methods

Participants and apparatus

We recruited a total of 20 undergraduate students from the departmental research pool (SONA) at Auburn University to receive course credit and the opportunity to earn a $25 Amazon gift card. Data from all but two participants were included in the subsequent analyses (exclusion criteria described below). Participants were 18 to 22 years old (M = 19.4, SD = 1.3), and 13 were female (65%). Participants completed the experiment on a 17-in. Angel POS touchscreen monitor (1,280 × 1,024 resolution) connected to a desktop computer running Windows 10. The task was programmed using Visual Basic 2015.

Procedure

For the duration of the experiment, participants sat alone in a 6.1 square-meter room without a phone or watch. Written instructions were presented on the touchscreen monitor. Instructions read:

“After pressing the PROCEED button, you will play a game on a computer. When ready, press the PLAY button. A new page will appear, and you will see buttons. Touching buttons could earn you points. A picture will appear if you earn a point. Then, the session will be resumed shortly. Earn as many points as you can. More points increase the likelihood of receiving a $25 Amazon gift card. To play this game, we ask you not to count your responses or points.”

Instructions to avoid counting have been reported in previous research to prevent biases in responding produced by counting (e.g., Grondin et al., 2004; Rayburn-Reeves et al., 2011).

Pressing “PROCEED” introduced a “PLAY” button, which initiated the first session. Figure 1 shows objects presented during the task. Participants completed 15 sessions. The first 10 sessions consisted of 24 trials and the last five sessions consisted of 36 trials. During each trial, a workspace consisting of a beach background with a height of 700 pixels and a width of 900 pixels appeared at the center of the monitor. The rest of the space on the monitor remained black throughout the session. Though invisible, the workspace was divided into two halves (left and right) and a button appeared at the center of each half at the onset of each trial. One of the buttons was a blue square and the other was an orange square (100 × 100 pixels each). A single symbol from playing cards was superimposed on each of the blue square and orange square buttons (e.g., red heart, black club), with symbol/button combinations counterbalanced across participants. We included these symbols to increase stimulus disparity, as greater disparity between response options tends to produce greater control by consequences (e.g., Gallagher & Alsop, 2001; Godfrey & Davison, 1998). Each button was presented equally often on the left and right sides of the screen, but neither appeared on the same side for more than three trials in a row. Within each half of the workspace, buttons randomly moved 25 pixels at 1.5-s intervals within a restricted area (200 pixels × 200 pixels).

Task interface. Note. Objects presented on the screen included a single workspace (700 px × 900 px) with a beach image in the background. Two buttons (90-px × 90-px squares) moved on the right or left side of the screen. Buttons were a blue square and an orange square with superimposed heart and club symbols. (Color figure online)
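The side-assignment rule described above (each button equally often on the left and right, never on the same side for more than three consecutive trials) can be illustrated with a short sketch. This is not the authors' Visual Basic implementation; the function names `longest_run` and `side_sequence` and the shuffle-and-reject approach are assumptions made here for illustration only.

```python
import random


def longest_run(seq):
    """Length of the longest run of identical consecutive items in seq."""
    best = run = 0
    prev = object()  # sentinel that never equals a real item
    for item in seq:
        run = run + 1 if item == prev else 1
        best = max(best, run)
        prev = item
    return best


def side_sequence(n_trials, rng, max_run=3):
    """Side of one button on each trial (the other button takes the opposite side).

    Hypothetical helper: shuffles a balanced list of sides and rejects any
    ordering that keeps the button on one side for more than max_run trials.
    """
    if n_trials % 2 != 0:
        raise ValueError("n_trials must be even for equal left/right counts")
    sides = ["left"] * (n_trials // 2) + ["right"] * (n_trials // 2)
    while True:
        rng.shuffle(sides)
        if longest_run(sides) <= max_run:
            return list(sides)


if __name__ == "__main__":
    print(side_sequence(24, random.Random(2024)))
```

Rejection sampling is used here only because it is easy to verify; any procedure that satisfies both constraints would serve equally well.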

For the first 12 trials of each session (hereafter Pre-Reversal phase), pressing the S1 button displayed a yellow star with “+1” for 1 s according to a fixed ratio (FR) 1 reinforcement schedule. Pressing the S2 button resulted in a brief blackout (the entire screen turning black for 1 s). For the next 12 trials (Trials 13–24; hereafter Post-Reversal phase) of each session, the contingencies were reversed between presses to the S1 and S2 buttons. We will refer to this portion of the experiment (i.e., Trials 1–24 across all 15 sessions) as the Contingency Reversal. Sessions 11–15 included an additional 12 trials (Trials 25–36) in which all responses resulted in a 1-s blackout and no reinforcer deliveries occurred. We will refer to these additional trials during Sessions 11–15 as the Extinction Test.
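As a compact restatement of the contingencies just described, the following sketch maps a session, trial, and choice onto its programmed consequence in Experiment 1. The function name `trial_outcome` and the string labels are hypothetical and are not taken from the original task software.

```python
def trial_outcome(session: int, trial: int, choice: str) -> str:
    """Return the programmed consequence for one button press in Experiment 1.

    Trials 1-12  (Pre-Reversal):    S1 -> reinforcer, S2 -> blackout
    Trials 13-24 (Post-Reversal):   S2 -> reinforcer, S1 -> blackout
    Trials 25-36 (Extinction Test, Sessions 11-15 only): always blackout
    """
    if choice not in ("S1", "S2"):
        raise ValueError("choice must be 'S1' or 'S2'")
    n_trials = 36 if session >= 11 else 24
    if not (1 <= session <= 15 and 1 <= trial <= n_trials):
        raise ValueError("session or trial out of range")

    if trial <= 12:
        reinforced = "S1"
    elif trial <= 24:
        reinforced = "S2"
    else:
        reinforced = None  # Extinction Test: no response is reinforced

    return "reinforcer" if choice == reinforced else "blackout"


if __name__ == "__main__":
    for t in (12, 13, 25):
        print(t, trial_outcome(11, t, "S1"), trial_outcome(11, t, "S2"))
```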

After completion of all but the last session, the buttons disappeared, and a rotating hourglass appeared on a white background for 5 s. Following this, a “PLAY” button reappeared and pressing it initiated the next session. After the 15th session, participants completed a postexperiment questionnaire which asked about demographic details (age, sex; see Supplemental Materials) and how they made decisions during the task.

Data analysis

Data screening

Data sets were excluded from analyses if (1) at least 50% of responses were allocated to the S2 button for five consecutive sessions during Trials 1 through 12 or (2) at least 50% of responses were allocated to the S1 button for five consecutive sessions during Trials 13 through 24. Similar exclusion criteria were implemented by McMillan and Spetch (2019) to exclude participants who failed to discriminate the contingencies for lack of attending to the task. Excluded data sets are presented with the Supplemental Materials.
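The two screening rules can be sketched as follows. The data layout (one list of choices per session, in trial order) and the names `longest_true_run` and `should_exclude` are assumptions for illustration. The sketch also assumes that "five consecutive sessions" means any run of five sessions anywhere in the experiment.

```python
def longest_true_run(flags):
    """Length of the longest run of consecutive True values."""
    best = run = 0
    for flag in flags:
        run = run + 1 if flag else 0
        best = max(best, run)
    return best


def should_exclude(sessions, run_length=5):
    """Apply the two exclusion criteria to one participant.

    sessions: list of per-session choice lists ('S1'/'S2'); index 0 is Trial 1.
    Criterion 1: >= 50% S2 choices on Trials 1-12 in run_length consecutive sessions.
    Criterion 2: >= 50% S1 choices on Trials 13-24 in run_length consecutive sessions.
    """
    pre_fail, post_fail = [], []
    for choices in sessions:
        pre, post = choices[:12], choices[12:24]
        pre_fail.append(pre.count("S2") / len(pre) >= 0.5)
        post_fail.append(post.count("S1") / len(post) >= 0.5)
    return (longest_true_run(pre_fail) >= run_length
            or longest_true_run(post_fail) >= run_length)


if __name__ == "__main__":
    tracker = [["S1"] * 12 + ["S2"] * 12 for _ in range(15)]
    always_s1 = [["S1"] * 24 for _ in range(15)]
    print(should_exclude(tracker), should_exclude(always_s1))
```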

Analytical strategy

We used a mixed-effects modeling approach (DeHart & Kaplan, 2019) to evaluate how various factors (i.e., Group, Session, Phase, Trial) influenced the likelihood of selecting S1. Specifically, a multilevel logistic model was used to evaluate trial-by-trial choice for S1 (1) and S2 (0). This approach suited the task and the research questions because multilevel models account for intercorrelations both within (i.e., Subject) and between (i.e., Group) levels of the data. Further, this approach preserves individual-level variability and supports further review of how reversal learning and resurgence occur for individuals.

All statistical procedures were performed using the R Statistical Program (R Core Team, 2021). Multilevel modeling was performed using the glmer function included in the lme4 package (Bates et al., 2015). Although the full data could be evaluated together, individual choices were evaluated separately for the Contingency Reversal (Sessions 1–15, Trials 1–24) and Extinction Test portions of each experiment (Sessions 11–15, Trials 25–36). This was the more pragmatic choice because the quantities of data differed and because research questions were specific to respective portions of the data. Bonferroni corrections (.05/2 = .025) were applied to address issues with repeated comparisons. Random effect structures were evaluated using the second-order Akaike information criterion (AICc) provided in the MuMIn R package (Bartoń, 2020), and the associated fixed effects were subsequently evaluated using likelihood-ratio tests.
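For readers unfamiliar with the two quantities mentioned above, the sketch below computes the standard small-sample correction to AIC and a Bonferroni-adjusted alpha. It illustrates the textbook formulas in Python rather than the R code used in the study; the function names and the example values for log-likelihood, parameter count, and sample size are hypothetical.

```python
def aicc(log_likelihood: float, k: int, n: int) -> float:
    """Second-order Akaike information criterion.

    AIC  = 2k - 2 * logLik
    AICc = AIC + 2k(k + 1) / (n - k - 1)
    where k is the number of estimated parameters and n the number of observations.
    """
    if n - k - 1 <= 0:
        raise ValueError("AICc is undefined when n <= k + 1")
    aic = 2 * k - 2 * log_likelihood
    return aic + (2 * k * (k + 1)) / (n - k - 1)


def bonferroni_alpha(alpha: float, n_comparisons: int) -> float:
    """Per-comparison alpha after a Bonferroni correction."""
    return alpha / n_comparisons


if __name__ == "__main__":
    # Hypothetical model fits; lower AICc indicates the preferred structure.
    print(aicc(log_likelihood=-100.0, k=3, n=50))
    print(aicc(log_likelihood=-98.5, k=6, n=50))
    print(bonferroni_alpha(0.05, 2))
```

The correction term shrinks toward zero as the number of observations grows, so AICc and AIC agree closely for large trial-level data sets.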

Results

Descriptive analysis

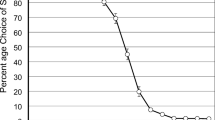

The left panel of Fig. 2 shows the percentage of S1 choices as means of Sessions 1–5, 6–10, and 11–15 (hereafter session blocks). A lower percentage of participants chose S1 during the first trial (M = 80.4%, SEM = 3.1) compared with subsequent Pre-Reversal trials across all three session blocks (M = 95.9%, SEM = 0.4). There were slight decreases in percentages suggesting anticipatory errors in the last trial before the midsession contingency reversals in some sessions across all three session blocks (see the Supplemental Materials). Following the reversal, the percentage of S1 choices on Trial 13 decreased across session blocks, with fewer errors observed on this trial in later session blocks (Sessions 1–5: M = 92.2%, SEM = 2.9; Sessions 11–15: M = 64.4%, SEM = 9.5). Percentage of S1 choices decreased rapidly across the first three Post-Reversal trials and reached low levels across session blocks (Trial 15: M = 4.4%, SEM = 1.3). The right panel of Fig. 2 shows the percentage of S1 choices across individual Sessions 11–15 during the last four trials before the Extinction Test and the first four trials during the Extinction Test. The right panel shows that there were no indications of anticipatory errors before the Extinction Test.

Percentage of S1 choices across trials and sessions in Experiment 1. Note. This figure shows the percentage of participants choosing S1 (1) as a mean across 5-session blocks (left panel) and (2) in individual Sessions 11–15 in the trials immediately preceding and following the onset of extinction (right panel). Solid lines represent the onset of contingency changes. + = FR1 schedule of reinforcement; − = extinction. Error bars represent standard errors of the mean. (Color figure online)

Responding during the Extinction Test

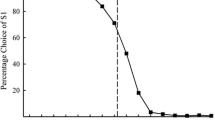

The percentage of S1 choices in Session 11 increased from the first to the second trial of the Extinction Test before decreasing on the subsequent trial and oscillating near 50% thereafter (M = 51.4%, SEM = 1.7). Figure 3 shows the pattern of responding across the first three trials of the Extinction-Test sessions. The percentage of participants choosing S1 was low, high, and low across Trials 25, 26, and 27, with this general pattern becoming less pronounced across sessions.

Percentage of S1 choices in the first three extinction-test trials of Experiment 1. Note. Black, gray, and white data points show the percentage of participants choosing S1 in the first three trials (25, 26, and 27) of the Extinction Test, respectively, across Sessions 11–15.

Figure 4 shows the mean number of clicks on areas of the screen other than the response buttons (hereafter workspace responses) per 12 trials across Sessions 11-15. The figure shows that workspace responses occurred more often during the Extinction-Test trials (25–36; M = 1.1, SEM = 0.2) relative to trials preceding the Extinction Test (13–24; M = 0.4, SEM = 0.1). Workspace responses remained low across these sessions during Trials 13–24.

Mean number of workspace responses before and during the extinction test in Experiment 1. Note. This figure shows the mean number of clicks on the background image across all participants in Sessions 11–15 both prior to (open circles) and during (filled circles) the Extinction Test. Clicks on the background image included clicks anywhere on the screen other than the two response buttons.

Response patterns

Figure 5 shows the percentage of participants adopting a win-stay/lose-shift, counting, or some other undefined response pattern during the Contingency Reversal and Extinction Test across sessions. The figure also shows the percentages of participants reporting win-stay/lose-shift (dotted line) and counting (dashed line) strategies in the post-experiment survey. We coded a win-stay/lose-shift response pattern when two S1 responses occurred immediately before and after the onset of the contingency reversal or extinction and the subsequent response was an S2 response. We coded a counting pattern when there were two S1 responses immediately before the onset of a contingency reversal or extinction and an S2 response after the change. We coded other patterns as those not meeting the definition of a win-stay/lose-shift or counting pattern. Figure 5 shows the prevalence of win-stay/lose-shift response patterns increased from 28% to 83%, while the prevalence of other response patterns decreased from 72% to 17% across Sessions 1–5. Counting remained at zero levels across Sessions 1–5. All response patterns remained stable across Sessions 6–10 until the prevalence of win-stay/lose-shift response patterns decreased from 72% to 44% and the prevalence of counting increased from 11% to 33% in Session 10. Those response patterns largely held steady throughout Sessions 11–15 during the Contingency Reversal (win-stay/lose-shift: M = 51.1%; counting: M = 36.7%). Initiating the Extinction Test produced (1) an increase and subsequent decrease in win-stay/lose-shift response patterns, (2) an immediate decrease in counting, and (3) an increase in other response patterns.

Percentage of win-stay/lose-shift and counting response patterns in Experiment 1. Note. This figure shows the percentage of response types coded as Win-Stay/Lose-Shift (W-S/L-S), Counting (Count), or Other across individual sessions in Experiment 1. Ext = Extinction Test. W-S/L-S: Two S1 responses occurred immediately before and after the onset of a contingency reversal or extinction and the subsequent response was S2. Count: Two S1 responses occurred immediately before the onset of a contingency reversal or extinction and the S2 response occurred immediately after the change. Other: Patterns did not meet either definition. The dashed line represents the percentage of participants reporting counting in the post-experiment survey; the dotted line represents the percentage of participants reporting a win-stay/lose-shift strategy
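The coding rules above can be made concrete with a small classifier for the midsession reversal. The wording of the win-stay/lose-shift definition admits more than one reading. This sketch assumes it means an S1 response on the last pre-change trial, an S1 response on the first post-change trial (the "lose" trial), and an S2 response on the next trial. The function name `code_pattern` and the list-based data layout are hypothetical, and the sketch covers only the S1-to-S2 reversal, not the Extinction Test.

```python
def code_pattern(choices, change_trial=13):
    """Classify one session's response pattern around a contingency reversal.

    choices: list of 'S1'/'S2' in trial order; index 0 is Trial 1.
    change_trial: 1-indexed number of the first trial under the new contingency.
    Requires at least two trials before and two trials from change_trial onward.
    """
    i = change_trial - 1  # list index of the first post-change trial
    if i < 2 or i + 1 >= len(choices):
        raise ValueError("need two trials before and after the change")

    # Counting: S1 on the two trials before the change, S2 immediately after,
    # i.e., a switch made before contacting the blackout.
    if choices[i - 2:i] == ["S1", "S1"] and choices[i] == "S2":
        return "counting"

    # Win-stay/lose-shift (assumed reading): stay on S1 until one
    # nonreinforced S1 response occurs, then switch to S2.
    if choices[i - 1] == "S1" and choices[i] == "S1" and choices[i + 1] == "S2":
        return "win-stay/lose-shift"

    return "other"


if __name__ == "__main__":
    print(code_pattern(["S1"] * 12 + ["S2"] * 12))  # switches on Trial 13
    print(code_pattern(["S1"] * 13 + ["S2"] * 11))  # switches on Trial 14
    print(code_pattern(["S1"] * 15 + ["S2"] * 9))   # perseverates
```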

Error patterns

Figure 6 shows the mean number of errors associated with different response patterns (counting, win-stay/lose-shift, other). The figure shows that counting resulted in the lowest number of errors (M = 0.2), while response patterns other than counting or win-stay/lose-shift resulted in the highest number of errors in each session block (M = 3.2).

Response latencies

The left panel of Fig. 7 shows differences in mean latencies to choices of S1 or S2 in the first three trials following the contingency reversal (Trials 13–15) relative to the trial immediately preceding the reversal (Trial 12) across Sessions 1–5 for participants engaging in counting, win-stay/lose-shift, or other response patterns. For participants engaging in win-stay/lose-shift response patterns, the figure shows little increase in latencies to choices of S1 or S2 from the last Pre-Reversal trial (12) to the first three Post-Reversal trials (13–15) in Sessions 1–5 (M = +8.9 ms). However, participants engaging in other response patterns demonstrated increases in latencies to each choice in Trials 13–14 relative to Trial 12 (M = +427.9 ms). The right panel of Fig. 7 shows differences in mean latencies to choices of S1 or S2 from the last Post-Reversal trial (Trial 24) to the first three Extinction-Test trials (Trials 25–27) across Sessions 11–15. The right panel shows that counting response patterns resulted in the greatest increases in response latency from Trial 24 to Trial 25 (M = 617.7 ms), while win-stay/lose-shift patterns resulted in more gradual increases in response latency across the first two Extinction-Test trials (25–26; Trial 25: M = +28.5 ms, Trial 26: M = 777.3 ms).

Differences in response latency from the last pre-change trial in Experiment 1. Note. ms = milliseconds. The left panel shows mean difference in reaction time from the last Pre-Reversal trial (Trial 12) across Sessions 1–5. The right panel shows mean difference in reaction time from the last Post-Reversal trial (Trial 24) across Sessions 11–15

Statistical analysis

Contingency reversal

Table 1 shows the results from the Contingency Reversal portion of the experiment. Results indicated a significant association between Trial number and the response (β = −0.30, p < .001), whereby increasing trials was associated with decreased odds of S1 versus S2 (factor of 0.74). Similarly, there was a significant association between the type of contingency and the response (β [Pre-Reversal] = 2.87, p < .001), whereby the odds of selecting S1 over S2 increased by a factor of 17.65. These findings suggest that responding tracked reinforcement contingencies.

Extinction test

Table 1 also shows the results from the Extinction Test portion of the experiment. The best-fitting model indicated a significant association between Trial and the response (β = 0.07, p < .001), whereby the odds of selecting S1 over S2 increased by a factor of 1.08 across trials within sessions.
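The "factor" values reported with each coefficient are odds ratios, obtained by exponentiating the logistic-regression coefficient (a log-odds value). A minimal sketch of that conversion, using the rounded coefficients quoted in the text, is shown below; the function name `odds_ratio` and the row labels are chosen here for illustration.

```python
import math


def odds_ratio(beta: float) -> float:
    """Convert a logistic-regression coefficient (log odds) to an odds ratio."""
    return math.exp(beta)


if __name__ == "__main__":
    reported = [
        ("Contingency Reversal: Trial", -0.30),
        ("Contingency Reversal: Phase [Pre-Reversal]", 2.87),
        ("Extinction Test: Trial", 0.07),
    ]
    for label, beta in reported:
        print(f"{label}: beta = {beta:+.2f}, odds ratio = {odds_ratio(beta):.2f}")
```

Odds ratios recomputed from two-decimal coefficients can differ from the reported factors in the second decimal place, presumably because the reported factors were derived from unrounded estimates.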

Discussion

Overall, choices between S1 and S2 were sensitive to both the midsession-reversal and extinction contingencies. We observed anticipatory errors by some participants before contingency reversals, consistent with McMillan and Spetch (2019) and Rayburn-Reeves et al. (2011). We also observed decreases in S1 choices during the first trial following contingency reversals, especially during later session blocks. In other words, some participants switched from S1 to S2 before experiencing the blackout for an incorrect response within sessions. This finding is consistent with Rayburn-Reeves et al. and indicative of counting. Therefore, the instruction not to count trials (Grondin et al., 2004) was not entirely effective in the present experiment or Rayburn-Reeves et al. (2011). Performance reflected experience with contingency changes and, for some participants, the additional influence of counting trials.

We also examined responding when extinguishing responses to both S1 and S2. Consistent with previous studies of resurgence, experiencing the extinction contingency for S2 produced an increase in S1 responding (e.g., Kuroda et al., 2017a; Podlesnik et al., 2020; Robinson & Kelley, 2020). That is, choices in extinction initially reflected previous experience with contingency reversals. The odds of choosing S1 increased across trials (although this was a small effect; see Table 1). In line with these findings, target responding during resurgence tests sometimes increases immediately and then decreases (e.g., Sweeney & Shahan, 2013). In other cases, resurgence initially occurs at a low rate before increasing (e.g., Doughty et al., 2007; Podlesnik & Shahan, 2009, 2010). Nevertheless, the present findings demonstrated that the conditions producing the resurgence effect in a majority of participants were consistent with the win-stay/lose-shift response pattern observed during contingency reversals. In other words, both types of contingency changes similarly induced abrupt changes in responding to the other option.

Experiment 2

Despite providing instructions not to count (see also Rayburn-Reeves et al., 2011), counting the number of trials to a contingency reversal became relatively common among participants during later sessions in Experiment 1. One approach that appears to minimize control by trial number with human participants is arranging contingency reversals after varying and unpredictable numbers of trials across successive sessions (see also Rayburn-Reeves et al., 2011). In Experiment 2, we replicated the procedures from Experiment 1, but varied reversals across sessions. Eliminating counting from performance during contingency reversals would facilitate the examination of whether a common win-stay/lose-shift response pattern could account for performance both during contingency reversals and subsequent extinction tests. Therefore, the present experiment examined how contingency changes and extinction affected response patterns and decision-making strategies under relatively uncertain conditions.

Methods

Participants and apparatus

Eighteen undergraduate students recruited from a psychology research pool (SONA) at Auburn University participated to receive course credit and the opportunity to earn a $25 Amazon gift card. No participants previously completed Experiment 1. Participants were 18 to 22 years old (M = 19.7, SD = 1.4), and 13 were female (72%). One participant reported red–green colorblindness, but we included this participant because the button stimuli were differentiated by both symbols and colors.

Procedure

The experimental apparatus and procedure were identical to Experiment 1, with the exception that reinforcement and extinction contingency reversals between S1 and S2 occurred variably across trials (on Trials 5, 9, 13, 17, or 21), with each reversal point occurring equally often across the three 5-session blocks. We arranged an equal number of reinforced trials for S1 and S2 within each session block.
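The balance property stated above can be checked with a short sketch. It assumes each of the five reversal points occurs exactly once per 5-session block and that the order within a block is randomized; the source states only that the points occurred equally often, so both the once-per-block reading and the random order are assumptions, as are the names `block_schedule` and `reinforced_counts`.

```python
import random

# First trial on which S2 (rather than S1) is reinforced, per the procedure.
REVERSAL_TRIALS = [5, 9, 13, 17, 21]


def block_schedule(rng):
    """Hypothetical schedule: each reversal point once per 5-session block."""
    order = REVERSAL_TRIALS[:]
    rng.shuffle(order)
    return order


def reinforced_counts(reversal_trials, trials_per_session=24):
    """Number of trials on which S1 and S2 are the reinforced option."""
    s1 = sum(r - 1 for r in reversal_trials)
    s2 = sum(trials_per_session - (r - 1) for r in reversal_trials)
    return s1, s2


if __name__ == "__main__":
    rng = random.Random(3)
    for block in range(1, 4):
        schedule = block_schedule(rng)
        print(block, schedule, reinforced_counts(schedule))
```

Under this reading, S1 is the reinforced option on 4 + 8 + 12 + 16 + 20 = 60 trials per block and S2 on the remaining 60, which matches the stated equality within each session block.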

Data analysis

The analytical approach for Experiment 2 was identical to that of Experiment 1, with the exception that we included only the Phase and Reversal Point factors in the model (i.e., not Trial or Time). This is because Time (i.e., the number of sessions in Phase) was not constant across levels of Reversal Point. We used emmeans (Lenth et al., 2021) with the best-fitting model to perform pairwise comparisons across different levels of Reversal Point. Exclusion criteria were identical to Experiment 1.

Results

Descriptive analysis

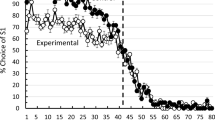

Figure 8 shows the percentage of S1 choices as means of session blocks in the trials immediately preceding and following contingency reversals (left panel) or in individual sessions across trials immediately preceding and following the onset of extinction (right panel). The majority of participants chose S1 during the four trials preceding contingency reversals across all session blocks (M = 94.6%, SEM = 0.7). As expected, given the variable reversal point, there was little indication of anticipatory errors in the last trial before contingency reversals across all three session blocks (see Supplemental Materials; cf. Experiment 1). Following the reversal, the percentage of S1 choices on the first Post-Reversal trial (labeled 1 in the left panel of Fig. 8) remained high across all blocks (M = 97.4%, SEM = 0.9) before decreasing on the subsequent trial (M = 20%, SEM = 2.9), indicating a win-stay/lose-shift strategy. The right panel of Fig. 8 shows that S1 choices increased in the last trials of the Post-Reversal phase during Session 15 from 6% to 11%, indicating few but greater than zero anticipatory errors before the Extinction Test.

Percentage of S1 choices before and after contingency changes in Experiment 2. Note. The left panel shows the percentage of participants choosing S1 as a mean across 5-session blocks in trials immediately preceding and following the onset of contingency reversals. The right panel shows the percentage of participants choosing S1 in individual sessions (11–15) immediately preceding and following the onset of extinction. Solid lines represent the onset of extinction. + = FR1 schedule of reinforcement; − = extinction. Error bars represent standard errors of the mean

Responding during the extinction test

As in Experiment 1, the right panel of Fig. 8 shows that the percentage of S1 choices in Session 11 increased from the first to the second trial of the Extinction Test before decreasing on the subsequent trial and oscillating near 50% thereafter (M = 50.3%, SEM = 1.7). Responding continued to oscillate near 50% for the remainder of Extinction-Test trials (see Supplemental Materials). Also consistent with Experiment 1, Fig. 9 shows that the percentage of participants choosing S1 was low, high, and low across Trials 25, 26, and 27, with this general pattern becoming less pronounced across sessions.

Percentage of S1 choices in the first three extinction-test trials of Experiment 2. Note. Black, gray, and white data points show the percentage of participants choosing S1 in the first three trials (25, 26, and 27) of the Extinction Test, respectively, across Sessions 11–15

As in Experiment 1, Fig. 10 shows that workspace responses occurred more often during the Extinction-Test trials (25–36; M = 1.4, SEM = 0.2) relative to preceding trials (13–24; M = 0.5, SEM = 0.1). Workspace responses remained relatively low across sessions in Trials 13–24.

Mean number of workspace responses before and during the extinction test in Experiment 2. Note. This figure shows the mean number of clicks on the background image across all participants in Sessions 11–15 both prior to (open circles) and during (filled circles) the Extinction Test. Clicks on the background image included clicks anywhere on the screen other than the two response buttons

Response patterns

Figure 11 shows that the prevalence of win-stay/lose-shift response patterns increased from 39% to 94%, while the prevalence of other response patterns decreased from 61% to 6% across the first five sessions, as in Experiment 1. Unlike Experiment 1, (1) response patterns meeting the definition of counting remained at near-zero levels across all sessions, and (2) the percentage of win-stay/lose-shift response patterns remained relatively high across Sessions 6–15 of the Contingency Reversal (M = 84.4%). Consistent with Experiment 1, initiating the Extinction Test produced an immediate increase and then decrease in win-stay/lose-shift responses across sessions and an increase in other response patterns.

Percentage of win-stay/lose-shift and counting response patterns in Experiment 2. Note. This figure shows the percentage of response types coded as Win-Stay/Lose-Shift (W-S/L-S), Counting (Count), or Other across individual sessions in Experiment 2. Ext = Extinction Test. W-S/L-S: Two S1 responses occurred immediately before and after the onset of a contingency reversal or extinction and the subsequent response was S2. Count: Two S1 responses occurred immediately before the onset of a contingency reversal or extinction and the S2 response occurred immediately after the change. Other: Patterns did not meet either definition. The dashed line represents the percentage of participants reporting counting in the post-experiment survey; the dotted line represents the percentage of participants reporting a win-stay/lose-shift strategy
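The coding rules in the note above can be sketched in code. The following is a minimal illustration, not the authors' scoring script: it reads the Win-Stay/Lose-Shift definition as S1 on the last pre-change trial and on the first post-change trial, followed by S2, and the Counting definition as S1 on the last two pre-change trials followed immediately by S2. The function name `classify_pattern` and the `stay`/`shift` parameters are hypothetical and were added for illustration only.

```python
from typing import Sequence


def classify_pattern(choices: Sequence[str], change_trial: int,
                     stay: str = "S1", shift: str = "S2") -> str:
    """Classify responding around a contingency change (illustrative only).

    choices: one choice per trial, with choices[0] being Trial 1.
    change_trial: 1-based number of the first trial under the new contingency.
    stay/shift: hypothetical labels for the option chosen before the change
    and the option switched to afterward.
    """
    i = change_trial - 1  # 0-based index of the first post-change trial
    if i < 2 or i + 1 >= len(choices):
        return "Other"  # too few trials on one side of the change to score
    before2, before1 = choices[i - 2], choices[i - 1]
    after1, after2 = choices[i], choices[i + 1]
    # Counting: two "stay" choices before the change, then an immediate switch.
    if before2 == stay and before1 == stay and after1 == shift:
        return "Count"
    # W-S/L-S: "stay" on the last pre-change and first post-change trials,
    # then a switch once the first nonreinforced choice has been experienced.
    if before1 == stay and after1 == stay and after2 == shift:
        return "W-S/L-S"
    return "Other"


# Two made-up 24-trial sessions with the change on Trial 13.
wsls_session = ["S1"] * 13 + ["S2"] * 11   # switches one trial after the change
count_session = ["S1"] * 12 + ["S2"] * 12  # switches on the change trial itself
print(classify_pattern(wsls_session, 13))
print(classify_pattern(count_session, 13))
```

Because the two definitions differ in the choice on the first post-change trial, a sequence can satisfy at most one of them under this reading; everything else falls into Other.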

Error patterns

Figure 12 shows that win-stay/lose-shift response patterns resulted in fewer errors (M = 1.7) compared with other response patterns across all session blocks (M = 3.2), as in Experiment 1.

Response latencies

For participants engaging in win-stay/lose-shift response patterns, the left panel of Fig. 13 shows no increase in latencies to choices of S1 or S2 from the last Pre-Reversal trial (12) to the first two Post-Reversal trials (13–14) in Sessions 1–5 (M = −78.0 ms). However, latencies to each choice increased slightly in Trial 15 relative to Trial 12 for those participants (M = +201.9 ms). For participants engaging in other response patterns, latencies decreased slightly in Trials 13–15 relative to Trial 12 (M = −151.25 ms).

Differences in response latency from the last pre-change trial in Experiment 2. Note. ms = milliseconds. The left panels show mean difference in reaction time from the last Pre-Reversal Trial (Trial 12) across Sessions 1–5. The right panels show mean difference in reaction time from the last Post-Reversal Trial (Trial 24) across Sessions 11–15

The right panel shows increases in mean latencies to choices of S1 or S2 from the last Post-Reversal trial (Trial 24) to the first three Extinction-Test trials (Trials 25–27) in Sessions 11–15. Win-stay/lose-shift and other response patterns both resulted in a gradual increase in response latency from the first Extinction-Test trial (25; win-stay/lose-shift: M = +5.4 ms; other: M = −121.1 ms) to the second Extinction-Test trial (26; win-stay/lose-shift: M = +929.7 ms; other: M = +1.1 s) relative to Trial 24.

Statistical analysis

Contingency reversal

Table 2 shows the results from the best-fitting model for the Contingency Reversal portion of the experiment. Results indicated a significant association between Phase and the response (β = 4.42, p < .001), whereby the odds of selecting S1 over S2 were higher in the Pre-Reversal phase compared with the Post-Reversal phase (factor of 82.86). Similarly, there was a significant association between Reversal Point (Trial 13, 17, 21) and the response (βs > 0.45, ps < .001), whereby the odds of selecting S1 over S2 were greater as the reversal was arranged on later trials. Pairwise comparisons across Reversal Points indicated that the odds of selecting S1 over S2 generally were greater on Trial 24 when the reversal occurred on later versus earlier trials (see Table 3). Overall, these findings were consistent with Experiment 1 and suggest that responding tracked the reinforcement contingencies.

Extinction test

Table 2 also shows the results from the Extinction Test portion of the experiment. The best-fitting model indicated a significant association between Trial and the response (β = 0.06, p < .002), whereby the odds of selecting S1 over S2 increased by a factor of 1.06 across trials within sessions. Thus, consistent with the results of Experiment 1, the odds of selecting S1 in Experiment 2 increased across trials during testing.
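The "factor of" values reported in this section are odds ratios, which for a logistic model are obtained by exponentiating the coefficient. The sketch below shows that arithmetic for the two coefficients reported above. It assumes the coefficients are on the log-odds scale and that Trial enters the Extinction-Test model linearly; the helper names are hypothetical. Exponentiating the rounded β = 4.42 gives roughly 83.1, close to the reported 82.86, with the small gap presumably reflecting rounding of the printed coefficient.

```python
import math


def odds_ratio(beta: float) -> float:
    """Convert a log-odds coefficient into an odds ratio."""
    return math.exp(beta)


def cumulative_odds_ratio(beta_per_trial: float, n_steps: int) -> float:
    """Odds multiplier after n_steps one-trial increments.

    Assumes the per-trial effect is constant on the log-odds scale.
    """
    return math.exp(beta_per_trial * n_steps)


phase_or = odds_ratio(4.42)   # Pre-Reversal vs. Post-Reversal (Contingency Reversal)
trial_or = odds_ratio(0.06)   # per-trial change during the Extinction Test
# Trials 25 through 36 span 11 one-trial steps.
across_test = cumulative_odds_ratio(0.06, 11)

print(f"Phase odds ratio: {phase_or:.2f}")
print(f"Per-trial odds ratio: {trial_or:.2f}")
print(f"Odds multiplier from Trial 25 to Trial 36: {across_test:.2f}")
```

Under these assumptions, a per-trial factor of 1.06 compounds to nearly a doubling of the odds of selecting S1 between the first and last Extinction-Test trials.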

Discussion

As in Experiment 1, choices between S1 and S2 were sensitive to both the reversal and extinction contingencies. Our findings in the present experiment were also consistent with Rayburn-Reeves et al. (2011) in that introducing contingency reversals after a variable number of trials across sessions reduced control by trial number and thereby eliminated counting. Instead, performance during reversals in the current experiment primarily reflected a win-stay/lose-shift strategy, in contrast to Experiment 1, which arranged midsession reversals.

Also consistent with Experiment 1, experiencing the extinction contingency for S2 produced an immediate increase in S1 responding in most participants, reflective of previous experiences with contingency reversals across prior sessions and consistent with a win-stay/lose-shift response pattern. Across subsequent extinction trials within each session, the probability of selecting S1 increased in both experiments. These findings are consistent with initial increases observed in other studies arranging more traditional procedures to examine resurgence (e.g., Doughty et al., 2007; Podlesnik & Kelley, 2014). Therefore, performance in studies of repeated midsession reversal learning and resurgence demonstrates a type of behavioral flexibility stemming from extinction inducing previously effective response patterns.

General discussion

The present series of experiments examined reversal learning in humans with either a fixed- or variable-reversal point. In the last five sessions of each experiment, we also examined resurgence by arranging extinction of both responses when extending sessions with additional nonreinforced trials, a novel extension of the standard reversal-learning paradigm. When contingency reversals occurred on a predictable trial midway through each session (i.e., low uncertainty regarding contingency changes), a majority of participants engaged in a win-stay/lose-shift strategy during early sessions, but we observed an increase in the prevalence of (1) counting and (2) anticipatory errors before reversals (Experiment 1). In contrast, arranging the reversal after a variable number of trials across sessions (i.e., high uncertainty regarding contingency changes) resulted in almost no counting and a high prevalence of win-stay/lose-shift response patterns (Experiment 2). We also found that extinction of S2 generally produced an immediate return to S1 across both experiments, indicative of a resurgence effect via a win-stay/lose-shift response pattern. These response patterns suggest increased prevalence of control by local contingencies (win-stay/lose-shift) over more global contingencies (counting) under conditions of uncertainty introduced through (1) contingency reversals after a variable number of trials and (2) extinction.

Humans have been shown to use a win-stay/lose-shift strategy across a wide variety of repeated decision scenarios introducing conditions of uncertainty (e.g., Cassotti et al., 2011; Zhang et al., 2021). A win-stay/lose-shift strategy is highly effective under some conditions of uncertainty (e.g., Nowak & Sigmund, 1993), at least within tasks including frequent feedback/reinforcement (e.g., B. A. Williams, 1972; Experiment 2). Moreover, win-stay/lose-shift response patterns likely reflect discriminative properties of consequences (Cowie, 2020; Simon et al., 2020). That is, the presence or absence of reinforcement for one response serves as a signal for the subsequent availability of reinforcement across response options.

That different strategies (i.e., counting in Experiment 1 and win-stay/lose-shift in Experiment 2) resulted in the fewest errors during contingency reversals (see Figs. 6 and 12) suggests that effectively adapting to environmental changes requires flexibly shifting between decision-making tactics. For a related example with birds, Kloskowski (2021) found that under some conditions, exclusive use of a win-stay/lose-shift strategy was suboptimal for selecting habitats with greater food availability (see also Otto et al., 2011). In line with this idea, the present findings indicate that win-stay/lose-shift and counting strategies were more or less optimal depending upon the level of uncertainty regarding contingency changes. That is, counting was the optimal strategy under conditions of low uncertainty (midsession reversal, Experiment 1), whereas win-stay/lose-shift was the optimal strategy under conditions of high uncertainty (variable reversal, Experiment 2).
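The reasoning in this paragraph can be illustrated with two idealized agents. The sketch below is not a model of the participants' data and does not reproduce the error means in Figs. 6 and 12. It assumes a 24-trial session in which S1 is reinforced before the reversal trial and S2 from the reversal trial onward, a pure win-stay/lose-shift agent that starts on S1, and a pure counting agent that switches on Trial 13 regardless of feedback. All function names are hypothetical.

```python
from statistics import mean

N_TRIALS = 24                        # trials per session before any added test trials
REVERSAL_POINTS = [5, 9, 13, 17, 21]  # variable reversal points from Experiment 2


def reinforced_option(trial: int, reversal_trial: int) -> str:
    """S1 is reinforced before the reversal trial, S2 from that trial onward."""
    return "S1" if trial < reversal_trial else "S2"


def wsls_errors(reversal_trial: int) -> int:
    """Errors made by an idealized win-stay/lose-shift agent starting on S1."""
    choice, errors = "S1", 0
    for trial in range(1, N_TRIALS + 1):
        if choice == reinforced_option(trial, reversal_trial):
            continue  # win: stay with the same option
        errors += 1
        choice = "S2" if choice == "S1" else "S1"  # lose: shift
    return errors


def counting_errors(reversal_trial: int, switch_trial: int = 13) -> int:
    """Errors made by an idealized agent that switches on a fixed trial number."""
    errors = 0
    for trial in range(1, N_TRIALS + 1):
        choice = "S1" if trial < switch_trial else "S2"
        if choice != reinforced_option(trial, reversal_trial):
            errors += 1
    return errors


# Fixed midsession reversal (as in Experiment 1): counting avoids the single
# error that win-stay/lose-shift needs in order to detect the change.
print(wsls_errors(13), counting_errors(13))

# Variable reversal points (as in Experiment 2): counting to Trial 13 is
# costly on average, whereas win-stay/lose-shift still makes one error.
print(mean(wsls_errors(r) for r in REVERSAL_POINTS),
      mean(counting_errors(r) for r in REVERSAL_POINTS))
```

Under these simplifying assumptions, counting is error-free only when the reversal point is predictable, whereas win-stay/lose-shift pays a fixed cost of one error per reversal wherever the reversal falls.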

Given differences in the number of errors resulting from counting and win-stay/lose-shift strategies, we also evaluated whether these strategies produced different effects on latencies to choosing S1 or S2 (see Figs. 7 and 13). Response latencies could reflect processes underlying choice that cannot be understood by observing selections of S1 or S2 alone (e.g., Konovalov & Krajbich, 2019). For example, prior research has shown that humans’ reaction times increase as a function of uncertainty in psychophysical tasks (see Bonnet et al., 2008, for a discussion). In the present study, we found that latencies remained relatively constant before and after contingency reversals across all sessions and strategies (see also Rayburn-Reeves & Cook, 2016; Smith et al., 2018, for similar findings with pigeons). During Sessions 11–15, in contrast, we showed for the first time that mean latencies to choices of S1 or S2 increased from the last Post-Reversal trial (Trial 24) to the first two Extinction-Test trials (Trials 25–26; see Mellgren & Elsmore, 1991, for related findings with rats). Thus, counting and win-stay/lose-shift produced different effects on response latencies during extinction, but not during contingency reversals.

Research in behavioral economics suggests that latencies can reflect differences in decision strategies (Kahneman, 2013). In line with this idea, counting in the present study resulted in the greatest increases in response latency from Trial 24 to Trial 25 in Experiment 1 as a result of encountering the added trials. In contrast, win-stay/lose-shift resulted in a more gradual increase in response latency across the first two Extinction-Test trials (25, 26) upon experiencing the extinction contingencies for S1 and S2. Thus, latencies peaked when experiencing the extinction contingency, although this occurred at different time points among participants engaging in different response patterns. Specifically, participants using a counting strategy typically switched to the opposite response (and experienced the extinction contingency) one trial earlier than participants using a win-stay/lose-shift strategy. Our findings, therefore, suggest that latencies peak with uncertainty regarding reinforcement contingencies (see Konovalov & Krajbich, 2019, for related findings).

As with response latencies, we also observed differences in response variability during contingency reversals versus extinction. For example, participants repeatedly switched between responses only during the Extinction Test, and workspace responses increased from Post-Reversal to Extinction-Test trials (see Figs. 4 and 10). These findings are similar to research in other experimental paradigms showing that extinction produces increases in variability in novel dimensions of previously reinforced behavior. For example, Antonitis (1951) reinforced rats’ nose-poke responses at a single location but observed during extinction that responses were allocated at increasing distances from the previously reinforced location (see also Eckerman & Lanson, 1969; Stokes, 1995; see Neuringer & Jensen, 2013, for a review). A related point comes from consumer-distribution models in the behavioral ecology literature. These models predict that animals experiencing a worsening of conditions (e.g., lower food quality or availability) will shift to exploring the surrounding environment as opposed to exploiting the impoverished food source (i.e., incentive contrast; see Flaherty, 1999, for a review). In contrast, animals experiencing only the less favorable condition without a shift will consume the lower quality of food throughout (Bernstein et al., 1988). Regarding the present findings, the removal of all reinforcers could have similarly produced an increase in exploratory behavior. The exploratory behavior appeared as resurgence of S1 but also could induce increases in other responses that never resulted in reinforcement under the current experimental conditions (i.e., workspace responses; see Bolívar et al., 2017; Cox et al., 2019; Ho et al., 2018, for similar findings). To further evaluate whether changes in responding during extinction are best characterized as extinction-induced variability or resurgence of a previously reinforced response specifically, future studies could include a control response with no reinforcement history across all phases of the experiment (e.g., Podlesnik et al., 2006; see Lattal & Oliver, 2020, for a relevant discussion).

In summary, we examined humans’ response patterns under changing reinforcement conditions by arranging a simple reversal learning task with additional nonreinforced trials during some sessions to evaluate resurgence. Consistent with previous research, participants adopted a win-stay/lose-shift response pattern under conditions of greater uncertainty, such as when contingency reversals occurred on unpredictable trials and during extinction. We extended prior research by demonstrating (1) that the use of a win-stay/lose-shift strategy under conditions of greater uncertainty led to more optimal performance (i.e., greater accuracy during contingency reversals) and (2) that counting and win-stay/lose-shift strategies produced different effects on response latencies during extinction but not during contingency reversals. The latter finding is in line with behavioral economic research and suggests that latency to choosing S1 or S2 increases during ambiguous reinforcement conditions. These findings also suggest that differences in environmental (un)certainty appear to induce qualitatively different decision-making strategies (i.e., counting versus win-stay/lose-shift; cf. McMillan & Spetch, 2019). Further study of response patterns during repeated decision tasks could contribute to our understanding of behavioral flexibility and of humans’ and nonhumans’ organization and selection of behavior under conditions of uncertainty.

References

Antonitis, J. J. (1951). Response variability in the white rat during conditioning, extinction, and reconditioning. Journal of Experimental Psychology, 42, 273–281. https://doi.org/10.1037/h0060407

Bai, J. Y. H., Cowie, S., & Podlesnik, C. A. (2017). Quantitative analysis of local-level resurgence. Learning & Behavior, 45, 76–88. https://doi.org/10.3758/s13420-016-0242-1

Bartoń, K. (2020). MuMIn: Multi-Model Inference. R package version 1.43.17. https://CRAN.R-project.org/package=MuMIn

Bates, D., Mächler, M., Bolker, B. M., & Walker, S. C. (2015). Fitting linear mixed-effects models using lme4. Journal of Statistical Software, 67(1), 1–48. https://doi.org/10.18637/jss.v067.i01

Bernstein, C., Kacelnik, A., & Krebs, J. R. (1988). Individual decisions and the distribution of predators in a patchy environment. Journal of Animal Ecology, 57, 1007–1026. https://doi.org/10.2307/5455

Bolívar, H. A., Cox, D. J., Barlow, M. A., & Dallery, J. (2017). Evaluating resurgence procedures in a human operant laboratory. Behavioural Processes, 140, 150–160. https://doi.org/10.1016/j.beproc.2017.05.004

Bonawitz, E., Denison, S., Gopnik, A., & Griffiths, T. L. (2014). Win-stay, lose-sample: A simple sequential algorithm for approximating Bayesian inference. Cognitive Psychology, 74, 35–65. https://doi.org/10.1016/j.cogpsych.2014.06.003

Bonnet, C., Fauquet Ars, J., & Estaún Ferrer, S. (2008). Reaction times as a measure of uncertainty. Psicothema, 20, 43–48. https://www.redalyc.org/articulo.oa?id=72720107

Briggs, A. M., Fisher, W. W., Greer, B. D., & Kimball, R. T. (2018). Prevalence of resurgence of destructive behavior when thinning reinforcement schedules during functional communication training. Journal of Applied Behavior Analysis, 51, 620–633. https://doi.org/10.1002/jaba.472

Cassotti, M., Houdé, O., & Moutier, S. (2011). Developmental changes of win-stay and loss-shift strategies in decision making. Child Neuropsychology, 17, 400–411. https://doi.org/10.1080/09297049.2010.547463

Cook, R. G., & Rosen, H. A. (2010). Temporal control of internal states in pigeons. Psychonomic Bulletin & Review, 17, 915–922. https://doi.org/10.3758/PBR.17.6.915

Cowie, S. (2020). Some weaknesses of a response-strength account of reinforcer effects. European Journal of Behavior Analysis, 21, 348–363. https://doi.org/10.1080/15021149.2019.1685247

Cox, D. J., Bolívar, H. A., & Barlow, M. A. (2019). Multiple control responses and resurgence of human behavior. Behavioural Processes, 159, 93–99. https://doi.org/10.1016/j.beproc.2018.12.003

Craig, A. R., Sullivan, W. E., Derrenbacker, K., Rimal, A., DeRosa, N. M., & Roane, H. S. (2020). An evaluation of resurgence in mice. Learning and Motivation, 72, Article 101671. https://doi.org/10.1016/j.lmot.2020.101671

da Silva, S. P., Cançado, C. R., & Lattal, K. A. (2014). Resurgence in Siamese fighting fish, Betta splendens. Behavioural Processes, 103, 315–319. https://doi.org/10.1016/j.beproc.2014.01.004

Davison, M., & Cowie, S. (2019). Timing or counting? Control by contingency reversals at fixed times or numbers of responses. Journal of Experimental Psychology: Animal Learning and Cognition, 45, 222–241. https://doi.org/10.1037/xan0000201

DeHart, W. B., & Kaplan, B. A. (2019). Applying mixed-effects modeling to single-subject designs: An introduction. Journal of the Experimental Analysis of Behavior, 111, 192–206. https://doi.org/10.1002/jeab.507

Doughty, S. S., Anderson, C. M., Doughty, A. H., Williams, D. C., & Saunders, K. J. (2007). Discriminative control of punished stereotyped behavior in humans. Journal of the Experimental Analysis of Behavior, 87, 325–336. https://doi.org/10.1901/jeab.2007.39-05

Eckerman, D. A., & Lanson, R. N. (1969). Variability of response location for pigeons responding under continuous reinforcement, intermittent reinforcement, and extinction. Journal of the Experimental Analysis of Behavior, 12, 73–80. https://doi.org/10.1901/jeab.1969.12-73

Flaherty, C. F. (1999). Incentive relativity. Cambridge University Press.

Gallagher, S., & Alsop, B. (2001). Effects of response disparity on stimulus and reinforcer control in human detection tasks. Journal of the Experimental Analysis of Behavior, 75, 183–203. https://doi.org/10.1901/jeab.2001.75-183

Godfrey, R., & Davison, M. (1998). Effects of varying sample- and choice-stimulus disparity on symbolic matching-to-sample performance. Journal of the Experimental Analysis of Behavior, 69, 311–326. https://doi.org/10.1901/jeab.1998.69-311

Grondin, S., Ouellet, B., & Roussel, M.-È. (2004). Benefits and limits of explicit counting for discriminating temporal intervals. Canadian Journal of Experimental Psychology, 58, 1–12. https://doi.org/10.1037/h0087436

Gutiérrez-Roig, M., Segura, C., Duch, J., & Perelló, J. (2016). Market imitation and win-stay lose-shift strategies emerge as unintended patterns in market direction guesses. PLOS ONE, 11, Article e0159078. https://doi.org/10.1371/journal.pone.0159078

Ho, T., Bai, J. Y. H., Keevy, M., & Podlesnik, C. A. (2018). Resurgence when challenging alternative behavior with progressive ratios in children and pigeons. Journal of the Experimental Analysis of Behavior, 110, 474–499. https://doi.org/10.1002/jeab.474

Izquierdo, A., Brigman, J. L., Radke, A. K., Rudebeck, P. H., & Holmes, A. (2017). The neural basis of reversal learning: An updated perspective. Neuroscience, 345, 12–26. https://doi.org/10.1016/j.neuroscience.2016.03.021

Kahneman, D. (2013). Thinking, fast and slow (Reprint ed.). Farrar, Straus and Giroux.

Kloskowski, J. (2021). Win-stay/lose-switch, prospecting-based settlement strategy may not be adaptive under rapid environmental change. Scientific Reports, 11, 570. https://doi.org/10.1038/s41598-020-79942-3

Konovalov, A., & Krajbich, I. (2019). Revealed strength of preference: Inference from response times. Judgment and Decision Making, 14, 381–394.

Kuroda, T., Mizutani, Y., Cançado, C. R. X., & Podlesnik, C. A. (2017a). Operant models of relapse in zebrafish (Danio rerio): Resurgence, renewal, and reinstatement. Behavioural Brain Research, 335, 215–222. https://doi.org/10.1016/j.bbr.2017.08.023

Kuroda, T., Mizutani, Y., Cançado, C. R. X., & Podlesnik, C. A. (2017b). Reversal learning and resurgence of operant behavior in zebrafish (Danio rerio). Behavioural Processes, 142, 79–83. https://doi.org/10.1016/j.beproc.2017.06.004

Laschober, M., Mundry, R., Huber, L., & Schwing, R. (2021). Kea (Nestor notabilis) show flexibility and individuality in within-session reversal learning tasks. Animal Cognition, 24, 1339–1351. https://doi.org/10.1007/s10071-021-01524-1

Lattal, K. A., & Oliver, A. C. (2020). The control response in assessing resurgence: Useful or compromised tool? Journal of the Experimental Analysis of Behavior, 113, 77–86. https://doi.org/10.1002/jeab.570

Lattal, K. A., Cançado, C. R., Cook, J. E., Kincaid, S. L., Nighbor, T. D., & Oliver, A. C. (2017). On defining resurgence. Behavioural Processes, 141, 85–91. https://doi.org/10.1016/j.beproc.2017.04.018

Laude, J. R., Pattison, K. F., Rayburn-Reeves, R. M., Michler, D. M., & Zentall, T. R. (2016). Who are the real bird brains? Qualitative differences in behavioral flexibility between dogs (Canis familiaris) and pigeons (Columba livia). Animal Cognition, 19, 163–169. https://doi.org/10.1007/s10071-015-0923-8

Lea, S., Chow, P., Leaver, L. A., & McLaren, I. (2020). Behavioral flexibility: A review, a model, and some exploratory tests. Learning & Behavior, 48, 173–187. https://doi.org/10.3758/s13420-020-00421-w

Lenth, R. V. (2021). emmeans: Estimated Marginal Means, aka Least-Squares Means. R package version 1.5.4. https://CRAN.R-project.org/package=emmeans

Liddon, C. J., Kelley, M. E., & Podlesnik, C. A. (2017). An animal model of differential reinforcement of alternative behavior. Learning and Motivation, 58, 48–58. https://doi.org/10.1016/j.lmot.2017.04.001

McMillan, N., & Roberts, W. A. (2012). Pigeons make errors as a result of interval timing in a visual, but not a visual-spatial, midsession reversal task. Journal of Experimental Psychology: Animal Behavior Processes, 38, 440–445. https://doi.org/10.1037/a0030192

McMillan, N., & Spetch, M. L. (2019). Anticipation of a midsession reversal in humans. Behavioural Processes, 159, 60–64. https://doi.org/10.1016/j.beproc.2018.12.016

McMillan, N., Kirk, C. R., & Roberts, W. A. (2014). Pigeon and rat performance in the midsession reversal procedure depends upon cue dimensionality. Journal of Comparative Psychology, 128, 357–366. https://doi.org/10.1037/a0036562

Mellgren, R. L., & Elsmore, T. F. (1991). Extinction of operant behavior: An analysis based on foraging considerations. Animal Learning & Behavior, 19, 317–325. https://doi.org/10.3758/BF03197892

Muething, C., Pavlov, A., Call, N., Ringdahl, J., & Gillespie, S. (2021). Prevalence of resurgence during thinning of multiple schedules of reinforcement following functional communication training. Journal of Applied Behavior Analysis, 54, 813–823. https://doi.org/10.1002/jaba.791

Mulick, J. A., Leitenberg, H., & Rawson, R. A. (1976). Alternative response training, differential reinforcement of other behavior, and extinction in squirrel monkeys (Saimiri sciureus). Journal of the Experimental Analysis of Behavior, 25, 311–320. https://doi.org/10.1901/jeab.1976.25-311

Neuringer, A., & Jensen, G. (2013). Operant variability. In G. J. Madden, W. V. Dube, T. D. Hackenberg, G. P. Hanley, & K. A. Lattal (Eds.), APA handbook of behavior analysis, Vol. 1. Methods and principles (pp. 513–546). American Psychological Association. https://doi.org/10.1037/13937-022

Nowak, M., & Sigmund, K. (1993). A strategy of win-stay, lose-shift that outperforms tit-for-tat in the Prisoner’s Dilemma game. Nature, 364, 56–58. https://doi.org/10.1038/364056a0

Otto, A. R., Taylor, E. G., & Markman, A. B. (2011). There are at least two kinds of probability matching: Evidence from a secondary task. Cognition, 118, 274–279. https://doi.org/10.1016/j.cognition.2010.11.009

Podlesnik, C. A., & Shahan, T. A. (2009). Behavioral momentum and relapse of extinguished operant responding. Learning & Behavior, 37, 357–364. https://doi.org/10.3758/LB.37.4.357

Podlesnik, C. A., & Shahan, T. A. (2010). Extinction, relapse, and behavioral momentum. Behavioural Processes, 84, 400–411. https://doi.org/10.1016/j.beproc.2010.02.001

Podlesnik, C. A., & Kelley, M. E. (2014). Resurgence: Response competition, stimulus control, and reinforcer control. Journal of the Experimental Analysis of Behavior, 102, 231–240. https://doi.org/10.1002/jeab.102

Podlesnik, C. A., Jimenez-Gomez, C., & Shahan, T. A. (2006). Resurgence of alcohol seeking produced by discontinuing non-drug reinforcement as an animal model of drug relapse. Behavioural Pharmacology, 17, 369–374. https://doi.org/10.1097/01.fbp.0000224385.09486.ba

Podlesnik, C. A., Kuroda, T., Jimenez-Gomez, C., Abreu-Rodrigues, J., Cançado, C. R. X., Blackman, A. L., Silverman, K., Villegas-Barker, J., Galbato, M., & Teixeira, I. S. C. (2019). Resurgence is greater following a return to the training context than remaining in the extinction context. Journal of the Experimental Analysis of Behavior, 111, 416–435. https://doi.org/10.1002/jeab.505

Podlesnik, C. A., Ritchey, C. M., & Kuroda, T. (2020). Repeated resurgence with and without a context change. Behavioural Processes, 174, Article 104105. https://doi.org/10.1016/j.beproc.2020.104105

R Core Team. (2021). R: A language and environment for statistical computing [Computer software]. R Foundation for Statistical Computing. https://www.R-project.org/

Rayburn-Reeves, R. M., & Cook, R. G. (2016). The organization of behavior over time: Insights from midsession reversal. Comparative Cognition and Behavior Reviews, 11, 103–125. https://doi.org/10.3819/ccbr.2016.110006

Rayburn-Reeves, R. M., Molet, M., & Zentall, T. R. (2011). Simultaneous discrimination reversal learning in pigeons and humans: Anticipatory and perseverative errors. Learning & Behavior, 39, 125–137. https://doi.org/10.3758/s13420-010-0011-5

Rayburn-Reeves, R. M., Stagner, J. P., Kirk, C. R., & Zentall, T. R. (2013). Reversal learning in rats (Rattus norvegicus) and pigeons (Columba livia): Qualitative differences in behavioral flexibility. Journal of Comparative Psychology, 127, 202–211. https://doi.org/10.1037/a0026311

Rayburn-Reeves, R. M., Qadri, M. A. J., Brooks, D. I., Keller, A. M., & Cook, R. G. (2017). Dynamic cue use in pigeon mid-session reversal. Behavioural Processes, 137, 53–63. https://doi.org/10.1016/j.beproc.2016.09.002

Robinson, T. P., & Kelley, M. E. (2020). Renewal and resurgence phenomena generalize to Amazon's Mechanical Turk. Journal of the Experimental Analysis of Behavior, 113, 206–213. https://doi.org/10.1002/jeab.57

Santos, C., Soares, C., Vasconcelos, M., & Machado, A. (2019). The effect of reinforcement probability on time discrimination in the midsession reversal task. Journal of the Experimental Analysis of Behavior, 111, 371–386. https://doi.org/10.1002/jeab.513

Shahan, T. A., & Chase, P. N. (2002). Novelty, stimulus control, and operant variability. The Behavior Analyst, 25, 175–190. https://doi.org/10.1007/BF03392056

Shahan, T. A., & Craig, A. R. (2017). Resurgence as choice. Behavioural Processes, 141, 100–127. https://doi.org/10.1016/j.beproc.2016.10.006

Shvarts, S., Jimenez-Gomez, C., Bai, J. Y. H., Thomas, R. R., Oskam, J. J., & Podlesnik, C. A. (2020). Examining stimuli paired with alternative reinforcement to mitigate resurgence in children diagnosed with autism spectrum disorder and pigeons. Journal of the Experimental Analysis of Behavior, 113, 214–231. https://doi.org/10.1002/jeab.575

Simon, C., Bernardy, J. L., & Cowie, S. (2020). On the “strength” of behavior. Perspectives on Behavior Science, 43, 677–696. https://doi.org/10.1007/s40614-020-00269-5

Smith, A. P., Zentall, T. R., & Kacelnik, A. (2018). Midsession reversal task with pigeons: Parallel processing of alternatives explains choices. Journal of Experimental Psychology: Animal Learning and Cognition, 44, 272–279. https://doi.org/10.1037/xan0000180

Stokes, P. D. (1995). Learned variability. Animal Learning & Behavior, 23, 164–176. https://doi.org/10.3758/BF03199931

Sweeney, M. M., & Shahan, T. A. (2013). Effects of high, low, and thinning rates of alternative reinforcement on response elimination and resurgence. Journal of the Experimental Analysis of Behavior, 100, 102–116. https://doi.org/10.1002/jeab.26

Tamura, K., & Masuda, N. (2015). Win-stay lose-shift strategy in formation changes in football. EPJ Data Science, 4, 1–19. https://doi.org/10.1140/epjds/s13688-015-0045-1

Wathen, S. N., & Podlesnik, C. A. (2018). Laboratory models of treatment relapse and mitigation techniques. Behavior Analysis: Research and Practice, 18, 362–387. https://doi.org/10.1037/bar0000119

Wedekind, C., & Milinski, M. (1996). Human cooperation in the simultaneous and the alternating Prisoner’s Dilemma: Pavlov versus generous tit-for-tat. Proceedings of the National Academy of Sciences, 93, 2686–2689. https://doi.org/10.1073/pnas.93.7.2686

Williams, B. A. (1972). Probability learning as a function of momentary reinforcement probability. Journal of the Experimental Analysis of Behavior, 17, 363–368.

Williams, C. L., & St. Peter, C. C. (2020). Resurgence of previously taught academic responses. Journal of the Experimental Analysis of Behavior, 113, 232–250. https://doi.org/10.1002/jeab.572

Worthy, D. A., & Maddox, W. T. (2012). Age-based differences in strategy use in choice tasks. Frontiers in Neuroscience, 5, 145. https://doi.org/10.3389/fnins.2011.00145

Worthy, D. A., Otto, A. R., & Maddox, W. T. (2012). Working-memory load and temporal myopia in dynamic decision making. Journal of Experimental Psychology: Learning, Memory, and Cognition, 38, 1640–1658. https://doi.org/10.1037/a0028146

Worthy, D. A., Hawthorne, M. J., & Otto, A. R. (2013). Heterogeneity of strategy use in the Iowa gambling task: A comparison of win-stay/lose-shift and reinforcement learning models. Psychonomic Bulletin & Review, 20, 364–371. https://doi.org/10.3758/s13423-012-0324-9

Zentall, T. R. (2020). The midsession reversal task: A theoretical analysis. Learning & Behavior, 48, 195–207. https://doi.org/10.3758/s13420-020-00423-8

Zentall, T. R., Andrews, D. M., Case, J. P., & Peng, D. N. (2019a). Less information results in better midsession reversal accuracy by pigeons. Journal of Experimental Psychology: Animal Learning and Cognition, 45, 422–430. https://doi.org/10.1037/xan0000215

Zentall, T. R., Peng, D. N., House, D. C., & Yadav, R. (2019b). Midsession reversal learning by pigeons: Effect on accuracy of increasing the number of stimuli associated with one of the alternatives. Learning & Behavior, 47, 326–333. https://doi.org/10.3758/s13420-019-00390-9

Zhang, H., Moisan, F., & Gonzalez, C. (2021). Paper-rock-scissors: An exploration of the dynamics of players’ strategies. Proceedings of the Human Factors and Ergonomics Society Annual Meeting, 64, 268–272. https://doi.org/10.1177/1071181320641063

Cite this article

Ritchey, C.M., Gilroy, S.P., Kuroda, T. et al. Assessing human performance during contingency changes and extinction tests in reversal-learning tasks. Learn Behav 50, 494–508 (2022). https://doi.org/10.3758/s13420-022-00513-9
