Abstract

Securing neural network (NN) computations with fully homomorphic encryption (FHE) is attracting growing interest in both the machine learning and cryptography communities. Among the possible approaches to this topic, our work focuses on applying FHE to hide the model of a neural network-based system when the input is provided in the clear. In this paper, using the TFHE homomorphic encryption scheme, we propose an efficient method for an \(\mathsf {argmin}\) computation on an arbitrary number of encrypted inputs, together with an asymptotically faster, though levelled, equivalent scheme. Using these schemes and a unifying framework for LWE-based homomorphic encryption schemes (Chimera), we implement a practically efficient homomorphic speaker recognition system based on the embedding-based neural network system VGGVox. This work can be applied to all other similar Euclidean embedding-based recognition systems (e.g. Google’s FaceNet). While maintaining the best-in-class classification rate of the VGGVox system, we demonstrate a speaker recognition system that can classify a speech sample as coming from one of 50 hidden speaker models in less than one minute.
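To make the classification task concrete, the following is a minimal plaintext sketch of what a Euclidean embedding-based recognizer computes: the index of the stored model closest to the input embedding. It involves no encryption and is not the homomorphic construction of the paper. The function names, the use of plain Python lists, and the toy two-dimensional vectors are assumptions made for illustration only.

```python
from typing import Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest_model(embedding: Sequence[float],
                  models: Sequence[Sequence[float]]) -> int:
    """Return the index of the model closest to the embedding (an argmin).

    In the hidden-model setting described above, the models would be
    encrypted and this argmin would be evaluated homomorphically; here
    everything is in the clear (illustrative assumption).
    """
    if not models:
        raise ValueError("at least one model is required")
    distances = [squared_distance(embedding, m) for m in models]
    # Ties are broken in favour of the lowest index.
    return min(range(len(distances)), key=distances.__getitem__)


# Toy usage with three hypothetical 2-dimensional speaker models.
models = [[1.0, 0.0], [0.1, 0.9], [-1.0, 0.0]]
sample = [0.0, 1.0]
closest = nearest_model(sample, models)  # the second model (index 1) is closest
```

Squared distances are used because the square root is monotonic and does not change which index attains the minimum.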

S. Carpov—This work was done in part while this author was at CEA, LIST.

Notes

1. The way these samples are selected may depend on some metadata and is beyond the scope of this paper.

2.

3. We implicitly write the possible values of \(\mu \) and the output value \(\mu _0\) as members of the torus space \(\mathbb {T}\). Alternatively, we also refer to 1/b as the value 1. This is arbitrary but allows us to represent the bootstrap operation very intuitively in Fig. 2.

4.

5. This greatly reduces the stress on the parameters induced by the \(\mathsf {MUX}\) gate.

6. This time with \(b = 4\) and with the 0 and 1 outputs swapped.

7.

A Parameters


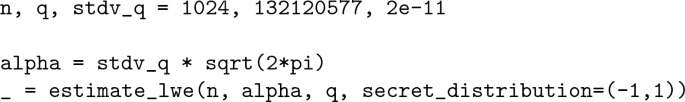

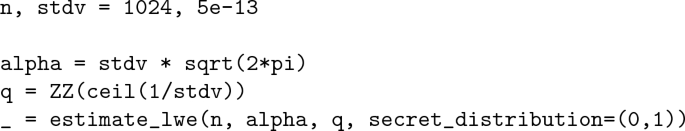

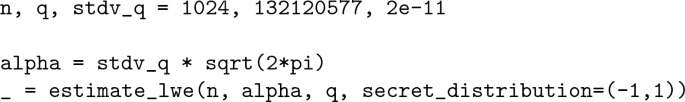

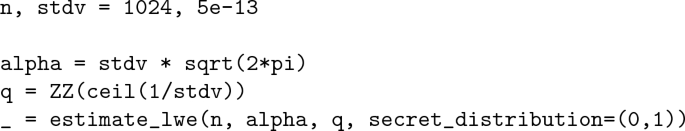

In this appendix we present the security parameters we use for both FV and TFHE; they are given in Tables 1, 2 and 3. The security of the chosen parameters was assessed with the following scripts, shown here for the first parameter set of Table 1 (lwe-estimator commit a276755):

- FV:

- TFHE:

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Zuber, M., Carpov, S., Sirdey, R. (2020). Towards Real-Time Hidden Speaker Recognition by Means of Fully Homomorphic Encryption. In: Meng, W., Gollmann, D., Jensen, C.D., Zhou, J. (eds) Information and Communications Security. ICICS 2020. Lecture Notes in Computer Science, vol 12282. Springer, Cham. https://doi.org/10.1007/978-3-030-61078-4_23

DOI: https://doi.org/10.1007/978-3-030-61078-4_23

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-61077-7

Online ISBN: 978-3-030-61078-4

eBook Packages: Computer Science, Computer Science (R0)