Abstract

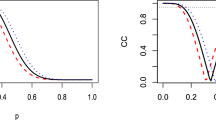

We provide analytical expressions governing changes to the bias and variance of the lookup table estimators provided by various Monte Carlo and temporal difference value estimation algorithms with offline updates over trials in absorbing Markov reward processes. We have used these expressions to develop software that serves as an analysis tool: given a complete description of a Markov reward process, it rapidly yields an exact mean-square-error curve, the curve one would get from averaging together sample mean-square-error curves from an infinite number of learning trials on the given problem. We use our analysis tool to illustrate classes of mean-square-error curve behavior in a variety of example reward processes, and we show that although the various temporal difference algorithms are quite sensitive to the choice of step-size and eligibility-trace parameters, there are values of these parameters that make them similarly competent, and generally good.
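To make concrete what an averaged mean-square-error curve is, the sketch below estimates one by brute-force simulation: it runs TD(0) with offline updates (increments accumulated during a trial and applied at its end) on a small absorbing Markov reward process, then averages the squared error over many independent runs. This is only a finite-sample approximation of the curve the abstract describes, not the analytical expressions themselves. The function names `true_values` and `sample_mse_curve`, the five-state random walk, the undiscounted setting, the zero initial estimates, and the unweighted average over states are all assumptions made for illustration.

```python
import numpy as np

def true_values(P, R):
    """Exact state values of an undiscounted absorbing Markov reward process.

    P: (n, n+1) transition probabilities; column n is the absorbing state.
    R: (n, n+1) reward received on each transition.
    """
    n = P.shape[0]
    expected_reward = (P * R).sum(axis=1)
    # Solve v = expected_reward + P_nonterminal v.
    return np.linalg.solve(np.eye(n) - P[:, :n], expected_reward)

def sample_mse_curve(P, R, start, alpha, n_trials, n_runs, seed=0):
    """Average squared error of offline TD(0) after each trial, over n_runs runs."""
    rng = np.random.default_rng(seed)
    n = P.shape[0]
    v_star = true_values(P, R)
    mse = np.zeros(n_trials + 1)
    for _ in range(n_runs):
        v = np.zeros(n)                      # assumed initial estimates
        mse[0] += np.mean((v - v_star) ** 2)
        for t in range(1, n_trials + 1):
            delta = np.zeros(n)              # increments held until the trial ends
            s = start
            while s != n:                    # index n is the absorbing state
                s_next = rng.choice(n + 1, p=P[s])
                v_next = 0.0 if s_next == n else v[s_next]
                delta[s] += alpha * (R[s, s_next] + v_next - v[s])
                s = s_next
            v += delta                       # offline update
            mse[t] += np.mean((v - v_star) ** 2)
    return mse / n_runs

# Hypothetical example: symmetric random walk on 5 states, absorbing at both
# ends, with reward 1 only when leaving the rightmost state to the right.
n = 5
P = np.zeros((n, n + 1))
R = np.zeros((n, n + 1))
for i in range(n):
    if i > 0:
        P[i, i - 1] = 0.5
    else:
        P[i, n] += 0.5                       # absorb off the left end
    if i < n - 1:
        P[i, i + 1] = 0.5
    else:
        P[i, n] += 0.5                       # absorb off the right end
        R[i, n] = 1.0

curve = sample_mse_curve(P, R, start=2, alpha=0.1, n_trials=50, n_runs=100)
```

Increasing `n_runs` makes `curve` smoother; the exact curve corresponds to the limit of infinitely many runs, which simulation can only approach.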

Cite this article

Singh, S., Dayan, P. Analytical Mean Squared Error Curves for Temporal Difference Learning. Machine Learning 32, 5–40 (1998). https://doi.org/10.1023/A:1007495401240

DOI: https://doi.org/10.1023/A:1007495401240