Abstract

Background

Decision-analytic cost-effectiveness (CE) models combine many different parameters, such as transition probabilities, event probabilities, utilities and costs, which are often obtained through meta-analysis. The method of meta-analysis may affect the CE estimate.
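To illustrate how such parameters combine into a single CE estimate, the following is a minimal sketch of a deterministic cohort Markov model. It is not the model used in the study: the three states (stable, progressed, dead), the function names `run_cohort` and `icer`, and all numerical inputs are hypothetical, and discounting is omitted for brevity.

```python
def run_cohort(p_progress, p_die, cost_per_cycle, utilities, cycles=20):
    """Hypothetical three-state cohort model (stable, progressed, dead).

    p_progress     : per-cycle probability of moving from stable to progressed
    p_die          : per-cycle probability of dying from the progressed state
    cost_per_cycle : (cost in stable, cost in progressed)
    utilities      : (utility in stable, utility in progressed)
    Returns (total cost, total QALYs) per patient, undiscounted.
    """
    stable, progressed = 1.0, 0.0  # the whole cohort starts in the stable state
    total_cost = 0.0
    total_qaly = 0.0
    for _ in range(cycles):
        # Accumulate costs and QALYs for the current state occupancy.
        total_cost += stable * cost_per_cycle[0] + progressed * cost_per_cycle[1]
        total_qaly += stable * utilities[0] + progressed * utilities[1]
        # Apply the transition probabilities; the dead state is implicit.
        new_progressed = progressed * (1.0 - p_die) + stable * p_progress
        stable = stable * (1.0 - p_progress)
        progressed = new_progressed
    return total_cost, total_qaly


def icer(cost_new, qaly_new, cost_old, qaly_old):
    """Incremental cost-effectiveness ratio: extra cost per extra QALY."""
    return (cost_new - cost_old) / (qaly_new - qaly_old)


if __name__ == "__main__":
    # Hypothetical inputs: a pooled relative risk of progression of 0.7
    # is applied to the comparator's transition probability.
    rr = 0.7
    old = run_cohort(0.10, 0.20, (100.0, 500.0), (0.8, 0.5))
    new = run_cohort(0.10 * rr, 0.20, (400.0, 500.0), (0.8, 0.5))
    print("ICER:", icer(new[0], new[1], old[0], old[1]))
```

In a sketch like this, a pooled relative risk from a meta-analysis enters only through `p_progress`, so any difference between pooling methods reaches the ICER through that single input.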

Aim

Our aim was to perform a simulation study that compares the performance of different methods of meta-analysis, especially with respect to model-based health economic (HE) outcomes.

Methods

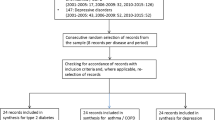

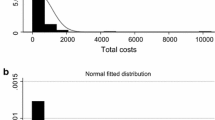

A reference patient population of 50,000 was simulated, from which sets of samples were drawn. Each sample represented a clinical trial comparing two fictitious interventions. Across several scenarios, the heterogeneity between these trials was varied by drawing one or more of the trials from predefined subpopulations. Parameter estimates from these trials were combined using frequentist fixed effects (FFE) and random effects (FRE), and Bayesian fixed effects (BFE) and random effects (BRE) meta-analysis. The pooled parameter estimates were entered into a probabilistic cost-effectiveness Markov model. The four methods of meta-analysis resulted in different parameter estimates and HE outcomes, which were compared with the true values in the reference population. Performance statistics were: (1) the percentage of repetitions in which the confidence interval of the probabilistic sensitivity analysis covered the true value (coverage), (2) the difference between the estimated and true value (bias), (3) the mean absolute value of the bias (MAD) and (4) the percentage of repetitions that resulted in a statistically significant difference between the two interventions (statistical power). Because differences between methods could be due to chance, every step of the analysis was repeated 1,000 times to study whether differences were systematic.
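The two frequentist pooling methods and the four performance statistics can be sketched as follows. This is an illustration under stated assumptions, not the study's implementation: the random effects function assumes the DerSimonian-Laird estimator of the between-trial variance, which the abstract does not confirm. The function names are hypothetical. Averaging the bias over repetitions and judging significance by whether the interval excludes a null value are also assumptions.

```python
def fixed_effect(estimates, variances):
    """Inverse-variance fixed effects pooling.

    Returns (pooled estimate, variance of the pooled estimate).
    """
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    return pooled, 1.0 / sum(weights)


def random_effects_dl(estimates, variances):
    """Random effects pooling, assuming the DerSimonian-Laird estimator.

    Returns (pooled estimate, variance of the pooled estimate, tau squared).
    """
    k = len(estimates)
    w = [1.0 / v for v in variances]
    pooled_fe, _ = fixed_effect(estimates, variances)
    # Cochran's Q: weighted squared deviations from the fixed effects estimate.
    q = sum(wi * (yi - pooled_fe) ** 2 for wi, yi in zip(w, estimates))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    # Method-of-moments between-trial variance, truncated at zero.
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, estimates)) / sum(w_star)
    return pooled, 1.0 / sum(w_star), tau2


def performance(results, true_value, null_value=0.0):
    """Summarise repetitions given as (estimate, interval low, interval high).

    Returns coverage, mean bias, mean absolute bias (MAD) and the share of
    repetitions whose interval excludes null_value (used here as power).
    """
    n = len(results)
    errors = [est - true_value for est, _, _ in results]
    coverage = sum(lo <= true_value <= hi for _, lo, hi in results) / n
    power = sum(not (lo <= null_value <= hi) for _, lo, hi in results) / n
    return {
        "coverage": coverage,
        "bias": sum(errors) / n,
        "mad": sum(abs(e) for e in errors) / n,
        "power": power,
    }


if __name__ == "__main__":
    # Three hypothetical trials with equal variances but different effects.
    y, v = [0.1, 0.3, 0.5], [0.01, 0.01, 0.01]
    print("FFE:", fixed_effect(y, v))
    print("FRE:", random_effects_dl(y, v))
```

With equal trial variances, both methods give the same point estimate, but the random effects variance is larger whenever the estimated between-trial variance is positive. That wider interval is the mechanism by which the choice of method can change coverage and power even when point estimates agree.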

Results

FFE, FRE and BFE lead to different parameter estimates but, when these are entered into the model, they do not lead to large differences in the point estimates of the HE outcomes, even in scenarios where heterogeneity was built in. Random effects methods do not necessarily reduce bias when heterogeneity is added to the trials, and may even increase bias in certain situations. BRE tends to overestimate the uncertainty reflected in the CE acceptability curve.

Conclusion

FFE, FRE and BFE lead to comparable HE outcomes. BRE tends to overestimate uncertainty. Based on this study, we recommend FRE as the preferred method of meta-analysis.

Acknowledgments

The authors thank Prof. Lidia Arends, Ph.D. and Prof. Jos Kleijnen, Ph.D. for their valuable contributions to the design and execution of this study. We also thank the Netherlands Organization for Health Research and Development (ZonMW), which sponsored this study under project number 152002002, and four anonymous reviewers for their valuable comments on the final manuscript.

Author Contributions

The last three authors initiated and designed the study. The first author performed the simulation study and wrote the manuscript. The last three authors contributed to writing, reviewing and approving the manuscript. All authors contributed to analyzing and interpreting the outcomes of the study.

About this article

Cite this article

Vemer, P., Al, M.J., Oppe, M. et al. A Choice That Matters?. PharmacoEconomics 31, 719–730 (2013). https://doi.org/10.1007/s40273-013-0067-0

DOI: https://doi.org/10.1007/s40273-013-0067-0