Abstract

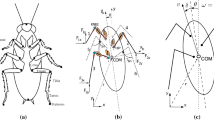

From matters of survival like chasing prey, to games like football, the problem of intercepting a target that moves in the horizontal plane is ubiquitous in human and animal locomotion. Recent data show that walking humans turn onto a straight path that leads a moving target by a constant angle, with some transients in the target-heading angle. We test four control strategies against the human data: (1) pursuit, or nulling the target-heading angle \(\beta\), (2) computing the required interception angle \(\hat{\beta}\), (3) constant target-heading angle, or nulling change in the target-heading angle \(\dot{\beta}\), and (4) constant bearing, or nulling change in the bearing direction of the target \(\dot{\psi}\), which is equivalent to nulling change in the target-heading angle while factoring out the turning rate \((\dot{\beta} - \dot{\phi})\). We show that human interception behavior is best accounted for by the constant bearing model, and that it is robust to noise in its input and parameters. The models are also evaluated for their performance with stationary targets, and implications for the informational basis and neural substrate of steering control are considered. The results extend a dynamical systems model of human locomotor behavior from static to changing environments.

Notes

This assumes that the midline is coincident with the locomotor axis, but exceptions include a “crabbing” gait for terrestrial animals, a crosswind for aerial animals, and a crosscurrent for aquatic animals.

This assumes that the observer is not rotating. It follows from the basic law of optic flow (Nakayama and Loomis 1974) that the target’s angular velocity is proportional to the sine of the target-heading angle and inversely proportional to distance.

The use of an allocentric reference axis to define heading is for convenience of analysis. The perceptual input to the agent is the target-heading angle \(\beta = \phi - \psi_{m}\).

We assume that \(v_{r}\) is positive in the direction extending from the agent to the target, such that \(v_{r,a} > 0\) and \(v_{r,m} < 0\) in Fig. 1b.

References

Bastin J, Calvin S, Montagne G (2006) Muscular proprioception contributes to the control of interceptive actions. J Exp Psychol Hum Percept Perform 32:964–972

Chapman S (1968) Catching a baseball. Am J Phys 53:849–855

Chardenon A, Montagne G, Buekers MJ, Laurent M (2002) The visual control of ball interception during human locomotion. Neurosci Lett 334:13–16

Chardenon A, Montagne G, Laurent M, Bootsma RJ (2004) The perceptual control of goal-directed locomotion: a common control architecture for interception and navigation? Exp Brain Res 158:100–108

Chardenon A, Montagne G, Laurent M, Bootsma RJ (2005) A robust solution for dealing with environmental changes in intercepting moving balls. J Mot Behav 37:52–64

Committeri G, Galati G, Paradis A, Pizzamiglio L, Berthoz A, Le Bihan D (2004) Reference frames for spatial cognition: different brain areas are involved in viewer-, object-, and landmark-centered judgments about object location. J Cogn Neurosci 16:1517–1535

Crowell JA, Banks MS, Shenoy KV, Andersen RA (1998) Visual self-motion perception during head turns. Nat Neurosci 1:732–737

Cutting JE, Vishton PM, Braren PA (1995) How we avoid collisions with stationary and moving obstacles. Psychol Rev 102:627–651

Duffy CJ (2004) The cortical analysis of optic flow. In: Chalupa L, Werner J (eds) The visual neurosciences, vol II. MIT, Cambridge, pp 1260–1261

Fajen BR, Warren WH (2003) Behavioral dynamics of steering, obstacle avoidance, and route selection. J Exp Psychol Hum Percept Perform 29:343–362

Fajen BR, Warren WH (2004) Visual guidance of intercepting a moving target on foot. Perception 33:689–715

Fajen BR, Warren WH, Temizer S, Kaelbling LP (2003) A dynamical model of visually-guided steering, obstacle avoidance, and route selection. Int J Comp Vis 54:13–34

Fink PW, Foo P, Warren WH (in press) Obstacle avoidance during walking in real and virtual environments. ACM Trans Appl Percept

Field DT, Wilkie RM, Wann JP (2006) Neural systems for the perception of heading and visual control of steering. (submitted)

Galati G, Lobel E, Vallar G, Berthoz A, Luigi P, Le Bihan D (2000) The neural basis of egocentric and allocentric coding of space in humans: a functional magnetic resonance study. Exp Brain Res 133:156–164

Gibson JJ (1950) Perception of the visual world. Houghton-Mifflin, Boston

Harris JM, Bonas W (2002) Optic flow and scene structure do not always contribute to the control of human walking. Vis Res 42:1619–1626

Harris MG, Carre G (2001) Is optic flow used to guide walking while wearing a displacing prism? Perception 30:811–818

Hollands MA, Patla AE, Vickers JN (2002) “Look where you’re going!”: gaze behavior associated with maintaining and changing the direction of locomotion. Exp Brain Res 143:221–250

Israel I, Warren WH (2005) Vestibular, proprioceptive, and visual influences on the perception of orientation and self-motion in humans. In: Wiener SI, Taube JS (eds) Head direction cells and the neural mechanisms of spatial orientation. MIT, Cambridge, pp 347–381

Kelso JAS (1995) Dynamic patterns: the self-organization of brain and behavior. MIT, Cambridge

Kugler PN, Turvey MT (1987) Information, natural law, and the self-assembly of rhythmic movement. Erlbaum, Hillsdale

Lenoir M, Musch E, Janssens M, Thiery E, Uyttenhove J (1999a) Intercepting moving objects during self-motion. J Mot Behav 31:55–67

Lenoir M, Savelsbergh GJ, Musch E, Thiery E, Uyttenhove J, Janssens M (1999b) Intercepting moving objects during self-motion: Effects of environmental changes. Res Q Exerc Sport 70:349–360

Lenoir M, Musch E, Thiery E, Savelsbergh GJ (2002) Rate of change of angular bearing as the relevant property in a horizontal interception task during locomotion. J Mot Behav 34:385–401

Li L, Warren WH (2002) Retinal flow is sufficient for steering during simulated rotation. Psychol Sci 13:485–491

Llewellyn KR (1971) Visual guidance of locomotion. J Exp Psychol 91:245–261

Michaels CF (2001) Information and action in timing the punch of a falling ball. Quart J Exp Psychol 54A:69–93

Morrone MC, Tosetti M, Montanaro D, Fiorentini A, Cioni G, Burr DC (2000) A cortical area that responds specifically to optic flow revealed by fMRI. Nat Neurosci 3:1322–1328

Nakayama K, Loomis JM (1974) Optical velocity patterns, velocity sensitive neurons, and space perception: a hypothesis. Perception 3:63–80

Olberg RM, Worthington AH, Venator KR (2000) Prey pursuit and interception in dragonflies. J Comp Physiol A 186:155–162

Peuskens H, Sunaert S, Dupont P, Van Hecke P, Orban GA (2001) Human brain regions involved in heading estimation. J Neurosci 21:2451–2461

Raffi M, Siegel RM (2004) Multiple cortical representations of optic flow processing. In: Vaina LM, Beardsley SA, Rushton SK (eds) Optic flow and beyond. Kluwer, Dordrecht, pp 3–22

Reichardt W, Poggio T (1976) Visual control of orientation behavior in the fly: I. A quantitative analysis. Q Rev Biophys 9:311–375

Rushton SK, Harris JM, Lloyd M, Wann JP (1998) Guidance of locomotion on foot uses perceived target location rather than optic flow. Curr Biol 8:1191–1194

Rushton SK, Wen J, Allison RS (2002) Egocentric direction and the visual guidance of robot locomotion: background, theory, and implementation. Biologically motivated computer vision. In: Proceedings: lecture notes in computer science, vol 2525, pp 576–591

Schöner G, Dose M, Engels C (1995) Dynamics of behavior: theory and applications for autonomous robot architectures. Robot Auton Syst 16:213–245

Spiro JE (2001) Going with the (virtual) flow. Nat Neurosci 4:120

Telford L, Howard IP, Ohmi M (1995) Heading judgments during active and passive self-motion. Exp Brain Res 104:502–510

Turano KA, Yu D, Hao L, Hicks JC (2005) Optic-flow and egocentric-direction strategies in walking: central vs peripheral visual field. Vis Res 45:3117–3132

Vaina LM, Soloviev S (2004) Functional neuroanatomy of heading perception in humans. In: Vaina LM, Beardsley SA, Rushton SK (eds) Optic flow and beyond. Kluwer, Dordrecht, pp 109–137

Warren WH (2004) Optic flow. In: Chalupa L, Werner J (eds) The visual neurosciences, vol II. MIT, Cambridge, pp 1247–1259

Warren WH, Kay BA, Zosh WD, Duchon AP, Sahuc S (2001) Optic flow is used to control human walking. Nat Neurosci 4:213–216

Wilkie RM, Wann JP (2002) Driving as night falls: the contribution of retinal flow and visual direction to the control of steering. Curr Biol 12:2014–2017

Wilkie R, Wann J (2003) Controlling steering and judging heading: retinal flow, visual direction, and extra-retinal information. J Exp Psychol Hum Percept Perform 29:363–378

Wilkie R, Wann J (2005) The role of visual and nonvisual information in the control of locomotion. J Exp Psychol Hum Percept Perform 31:901–911

Wood RM, Harvey MA, Young CE, Beedie A, Wilson T (2000) Weighting to go with the flow? Curr Biol 10:R545–R546

Zhang T, Heuer HW, Britten KH (2004) Parietal area VIP neuronal responses to heading stimuli are encoded in head-centered coordinates. Neuron 42:993–1001

Acknowledgments

This research was supported by the National Eye Institute (EY10923), National Institute of Mental Health (K02 MH01353) and the National Science Foundation (NSF 9720327).

Appendix

The appendix describes the methods used in the human experiments and the model simulations.

Human data

The human data were collected in the Virtual Environment Navigation Lab at Brown University (Spiro 2001; for details, see Fajen and Warren 2004). Eight volunteers walked in a 12 × 12 m area while wearing a head-mounted display (Kaiser Proview 80, field of view 60° H × 40° V). Head position and orientation were recorded with an ultrasound/inertial tracking system (Intersense IS-900) at 60 Hz. The virtual environment was generated on a graphics workstation (SGI Onyx2 IR) and presented stereoscopically at 60 frames/s, with a latency of approximately 50–70 ms (3–4 frames). The target was a marble-textured cylinder (2.5 m tall, 0.1 m radius) that moved horizontally at a speed of 0.6 m/s. After the participant walked 1 m in a specified direction, the target appeared at a distance of 3 m along the z axis, either directly in front of the participant at 0° (Center condition) or 25° to the left of the participant’s initial heading (Side condition). It either moved rightward perpendicular to the initial heading (Cross condition), approached at an angle of 30° from the perpendicular (Approach condition), or retreated at an angle of 30° (Retreat condition). These conditions were mirrored left/right and the data collapsed. In the No Background condition, the target moved in empty black space; in the Background condition, the target moved in a room with random-textured floor, walls, and ceiling. There were 10 trials in each condition, blocked by Background and randomized within blocks. Head position in x and z was filtered (zero-lag, 0.6 Hz cutoff) and the direction of motion (φ) was computed for each pair of frames. Because the filter compresses data points near the end of the time series, it produces an artifactual drop in speed and heading angle; we therefore truncated the last 500 ms of the filtered time series to eliminate these effects. The time series of target-heading angle (β) for each trial was normalized to a length of 25 data points by sub-sampling, and the mean time series was computed in each condition.
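The post-filtering steps described above can be sketched in Python as follows. This is only an illustration under stated assumptions: the low-pass filter is omitted because its type is not specified here, the direction of motion is assumed to be measured from the z axis (positive toward +x), frames are assumed to be paired successively, and the function names and the evenly spaced sub-sampling rule are hypothetical choices rather than the procedure actually used.

```python
import math


def heading_series(xs, zs):
    """Direction of motion (rad) for each pair of successive frames.

    ASSUMPTION: angle measured from the z axis, positive toward +x.
    """
    return [math.atan2(x1 - x0, z1 - z0)
            for x0, x1, z0, z1 in zip(xs, xs[1:], zs, zs[1:])]


def truncate_tail(series, seconds=0.5, rate_hz=60):
    """Drop the last `seconds` of samples (500 ms at 60 Hz = 30 frames)."""
    n_drop = round(seconds * rate_hz)
    return series[:len(series) - n_drop]


def subsample(series, n_points=25):
    """Pick n_points evenly spaced samples, including the first and last.

    ASSUMPTION: 'sub-sampling' means nearest-index selection; series
    shorter than n_points would yield repeated samples.
    """
    last = len(series) - 1
    return [series[round(i * last / (n_points - 1))] for i in range(n_points)]


def mean_series(trials):
    """Pointwise mean across trials of equal length."""
    return [sum(values) / len(values) for values in zip(*trials)]


# Hypothetical usage: two beta time series of different lengths.
trial_a = [0.10 * i for i in range(79)]
trial_b = [0.05 * i for i in range(109)]
normalized = [subsample(truncate_tail(t)) for t in (trial_a, trial_b)]
mean_beta = mean_series(normalized)
```

Normalizing each trial to the same number of points before averaging lets trials of different durations contribute equally to the condition mean.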

Model simulations

The method used to simulate each model is illustrated using model #4 (null \(-\dot{\psi}_{m}\); Eq. 5a, b). The agent’s angular acceleration is a function of the agent’s rate of rotation \((\dot{\phi})\), the change in allocentric direction of the target \((\dot{\psi}_{m})\), and the target distance \(d_{m}\). The quantity \(\dot{\psi}_{m}\) can be expressed as a function of the agent’s position \((x_{a}, z_{a})\) and velocity \((v_{x,a}, v_{z,a})\), and the target’s position \((x_{m}, z_{m})\) and velocity \((v_{x,m}, v_{z,m})\).

Likewise, \(d_{m}\) can be expressed as a function of the agent’s position and the target’s position.
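For concreteness, one way to write these two quantities is given below. It assumes that allocentric directions are measured from the z axis and are positive toward +x; this reference-axis convention is an assumption made for illustration, and a different convention would change the signs.

\[
\psi_{m} = \arctan\!\left(\frac{x_{m} - x_{a}}{z_{m} - z_{a}}\right), \qquad
\dot{\psi}_{m} = \frac{(z_{m} - z_{a})(v_{x,m} - v_{x,a}) - (x_{m} - x_{a})(v_{z,m} - v_{z,a})}{(x_{m} - x_{a})^{2} + (z_{m} - z_{a})^{2}}
\]

\[
d_{m} = \sqrt{(x_{m} - x_{a})^{2} + (z_{m} - z_{a})^{2}}
\]

The expression for \(\dot{\psi}_{m}\) follows from differentiating \(\psi_{m}\) with respect to time, and its denominator is \(d_{m}^{2}\).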

Locomotion toward a moving target is thus represented as a 6D system: to predict the agent’s future position we need to know its current heading \((y_{1} = \phi)\), turning rate \((y_{2} = \dot{\phi})\), and position \((y_{3} = x_{a},\ y_{4} = z_{a})\), as well as the position of the target \((y_{5} = x_{m},\ y_{6} = z_{m})\), assuming that agent speed \((v_{a})\), target speed \((v_{m})\), and target direction of motion \((\gamma)\) are given. Written as a system of first-order differential equations, the full constant bearing model specifies the rate of change of each of these six state variables.
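As a rough illustration of how such a six-variable system can be integrated, the Python sketch below steps the state \((\phi, \dot{\phi}, x_{a}, z_{a}, x_{m}, z_{m})\) forward in time. Several things here are assumptions rather than the method reported in this appendix: the form of the control law (a damping term plus a term that nulls \(-\dot{\psi}_{m}\), scaled by distance), the parameter values `B`, `K_M`, and `C_1` (placeholders, not fitted values), the convention that angles are measured from the z axis, and the fixed-step fourth-order Runge-Kutta integrator, which stands in for an adaptive routine.

```python
import math

# Placeholder parameters for illustration only (NOT fitted values).
B = 7.75      # damping on turning rate, 1/s
K_M = 6.0     # gain on the bearing-rate term
C_1 = 1.0     # distance offset, m
V_A = 1.29    # agent speed, m/s (value given in the text)
V_M = 0.6     # target speed, m/s (value given in the text)
CAPTURE_RADIUS = 0.15  # termination distance, m (value given in the text)


def bearing_rate(xa, za, vxa, vza, xm, zm, vxm, vzm):
    """Rate of change of the target's allocentric direction.

    ASSUMPTION: direction is measured from the z axis, positive toward +x.
    """
    dx, dz = xm - xa, zm - za
    return (dz * (vxm - vxa) - dx * (vzm - vza)) / (dx * dx + dz * dz)


def derivatives(y, gamma):
    """Right-hand side for y = [phi, phi_dot, x_a, z_a, x_m, z_m]."""
    phi, phi_dot, xa, za, xm, zm = y
    vxa, vza = V_A * math.sin(phi), V_A * math.cos(phi)
    vxm, vzm = V_M * math.sin(gamma), V_M * math.cos(gamma)
    d_m = math.hypot(xm - xa, zm - za)
    psi_dot = bearing_rate(xa, za, vxa, vza, xm, zm, vxm, vzm)
    # ASSUMED control law: damping plus a distance-scaled term nulling -psi_dot.
    phi_ddot = -B * phi_dot - K_M * (-psi_dot) * (d_m + C_1)
    return [phi_dot, phi_ddot, vxa, vza, vxm, vzm]


def rk4_step(y, gamma, dt):
    """One classical fourth-order Runge-Kutta step."""
    def shifted(k, h):
        return [yi + h * ki for yi, ki in zip(y, k)]
    k1 = derivatives(y, gamma)
    k2 = derivatives(shifted(k1, dt / 2), gamma)
    k3 = derivatives(shifted(k2, dt / 2), gamma)
    k4 = derivatives(shifted(k3, dt), gamma)
    return [yi + dt / 6 * (a + 2 * b + 2 * c + d)
            for yi, a, b, c, d in zip(y, k1, k2, k3, k4)]


def simulate(y0, gamma, dt=0.01, t_max=10.0):
    """Integrate until the agent is within CAPTURE_RADIUS or t_max elapses."""
    y, t = list(y0), 0.0
    while t < t_max:
        if math.hypot(y[4] - y[2], y[5] - y[3]) < CAPTURE_RADIUS:
            return t, y, True
        y = rk4_step(y, gamma, dt)
        t += dt
    return t, y, False


# Target 3 m straight ahead of the agent, moving rightward (gamma = 90 deg).
t_end, y_end, intercepted = simulate([0.0, 0.0, 0.0, 0.0, 0.0, 3.0],
                                     gamma=math.pi / 2)
print(f"intercepted={intercepted} at t={t_end:.2f} s")
```

Stopping at a capture radius rather than at contact keeps the bearing-rate term finite, since its denominator shrinks with the squared distance.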

Simulations of Eq. 5a, b (as well as the set of equations corresponding to the other models) were performed in Matlab, using the ode45 integration routine. The model speed was constant at 1.29 m/s, equal to the mean maximum human walking speed during a trial. A run was terminated when the model came within 15 cm of the target, to prevent the target-heading angle from diverging due to small positional errors near the target. We fit the model to the mean time series of target-heading angle in each condition by searching iteratively for the parameter values that minimized the error in β at each time step across all conditions, using a least-squares criterion. Goodness-of-fit was measured by calculating the root-mean-square error (RMSE) between the model β time series and the mean human β time series. We also report the \(r^{2}\) based on a linear regression of the model and human β time series.
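The two goodness-of-fit measures can be sketched in Python as follows. This is a minimal illustration with hypothetical function names; it assumes the model and human β series have already been brought to the same length, and it computes \(r^{2}\) as the squared Pearson correlation, which equals the \(r^{2}\) of a simple linear regression of one series on the other.

```python
import math


def rmse(model, human):
    """Root-mean-square error between two equal-length series."""
    return math.sqrt(sum((m - h) ** 2 for m, h in zip(model, human)) / len(model))


def r_squared(model, human):
    """r^2 of a simple linear regression, i.e. the squared Pearson correlation."""
    n = len(model)
    mean_m, mean_h = sum(model) / n, sum(human) / n
    s_mh = sum((m - mean_m) * (h - mean_h) for m, h in zip(model, human))
    s_mm = sum((m - mean_m) ** 2 for m in model)
    s_hh = sum((h - mean_h) ** 2 for h in human)
    return s_mh * s_mh / (s_mm * s_hh)


# Hypothetical beta series (degrees), for illustration only.
model_beta = [0.0, 5.0, 10.0, 14.0, 16.0]
human_beta = [1.0, 4.0, 11.0, 13.0, 17.0]
print(rmse(model_beta, human_beta), r_squared(model_beta, human_beta))
```

The two measures are complementary: RMSE is sensitive to a constant offset between the series, whereas \(r^{2}\) reflects only how well their shapes covary.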

Fajen, B.R., Warren, W.H. Behavioral dynamics of intercepting a moving target. Exp Brain Res 180, 303–319 (2007). https://doi.org/10.1007/s00221-007-0859-6

DOI: https://doi.org/10.1007/s00221-007-0859-6