Abstract

Conjugate gradient methods have been extensively used to locate unconstrained minimum points of real-valued functions. At present, there are several readily implementable conjugate gradient algorithms that do not require exact line search and yet are shown to be superlinearly convergent. However, these existing algorithms usually require several trials to find an acceptable stepsize at each iteration, and their inexact line search can be very time-consuming.
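To make the cost of repeated trials concrete, the following is a minimal sketch of a generic conjugate gradient iteration with an Armijo-type backtracking line search that records how many trial stepsizes each iteration needs. It is not the algorithm proposed in this paper, whose stepsize scheme the abstract does not describe. The names `armijo_step` and `cg_minimize`, the Polak-Ribiere update with a nonnegativity safeguard, the restart every n iterations, and all parameter values are assumptions chosen for illustration.

```python
def armijo_step(f, x, fx, g, d, alpha0=1.0, beta=0.5, sigma=1e-4, max_trials=50):
    """Backtrack from alpha0 until the Armijo sufficient-decrease test holds.

    Returns (alpha, trials); trials is the number of trial stepsizes evaluated.
    All default parameter values are illustrative assumptions.
    """
    slope = sum(gi * di for gi, di in zip(g, d))  # directional derivative along d
    alpha = alpha0
    for trial in range(1, max_trials + 1):
        x_new = [xi + alpha * di for xi, di in zip(x, d)]
        if f(x_new) <= fx + sigma * alpha * slope:
            return alpha, trial
        alpha *= beta  # shrink the stepsize and try again
    raise RuntimeError("no acceptable stepsize found")


def cg_minimize(f, grad, x0, tol=1e-8, max_iter=500):
    """Generic Polak-Ribiere conjugate gradient sketch with restarts every n steps.

    Returns (x, trials_per_iter), where trials_per_iter[k] is the number of
    trial stepsizes used at iteration k.
    """
    n = len(x0)
    x = list(x0)
    g = grad(x)
    d = [-gi for gi in g]
    trials_per_iter = []
    for k in range(max_iter):
        gnorm2 = sum(gi * gi for gi in g)
        if gnorm2 ** 0.5 <= tol:
            break
        # Safeguard: fall back to steepest descent if d is not a descent direction.
        if sum(gi * di for gi, di in zip(g, d)) >= 0.0:
            d = [-gi for gi in g]
        alpha, trials = armijo_step(f, x, f(x), g, d)
        trials_per_iter.append(trials)
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = grad(x)
        if (k + 1) % n == 0:
            beta_pr = 0.0  # periodic restart along the negative gradient
        else:
            # Polak-Ribiere coefficient, clipped at zero.
            num = sum(gn * (gn - go) for gn, go in zip(g_new, g))
            beta_pr = max(0.0, num / gnorm2)
        d = [-gn + beta_pr * di for gn, di in zip(g_new, d)]
        g = g_new
    return x, trials_per_iter
```

Calling `cg_minimize` with a function, its gradient, and a starting point returns the final iterate together with the per-iteration trial counts. Any count above one marks an iteration where the first trial stepsize was rejected and extra function evaluations were spent.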

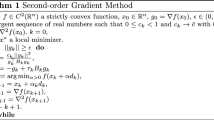

In this paper we present new readily implementable conjugate gradient algorithms that will eventually require only one trial stepsize to find an acceptable stepsize at each iteration.

Under the usual continuity assumptions on the function being minimized, we establish the following properties of the proposed algorithms. Without any convexity assumptions, the algorithms are globally convergent in the sense that every accumulation point of the generated sequences is a stationary point. Furthermore, when the generated sequences converge to local minimum points satisfying second-order sufficient conditions for optimality, the algorithms eventually demand only one trial stepsize at each iteration, and their rate of convergence is n-step superlinear and n-step quadratic.
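The abstract does not spell out the two rate notions, so the following states them in the form commonly used in the conjugate gradient literature; this is an assumption about the intended definitions. Here $x_k$ denotes the iterates, $x^*$ their limit, and $n$ the number of variables.

$$
\text{n-step superlinear:}\quad \lim_{k \to \infty} \frac{\lVert x_{k+n} - x^* \rVert}{\lVert x_k - x^* \rVert} = 0,
\qquad
\text{n-step quadratic:}\quad \limsup_{k \to \infty} \frac{\lVert x_{k+n} - x^* \rVert}{\lVert x_k - x^* \rVert^{2}} < \infty .
$$

In both cases the error is compared across a full cycle of $n$ iterations rather than between consecutive iterates. Some authors take the index $k$ only over restart iterations.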

Additional information

This research was supported in part by the National Science Foundation under Grant No. ENG 76-09913.

About this article

Cite this article

Mukai, H. Readily implementable conjugate gradient methods. Mathematical Programming 17, 298–319 (1979). https://doi.org/10.1007/BF01588252

DOI: https://doi.org/10.1007/BF01588252