Abstract

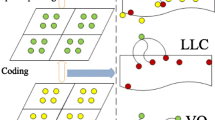

Recent work has increasingly used context for high-level reasoning to improve recognition accuracy. In this paper, we consider an orthogonal application of context: we explore its use to determine which low-level appearance cues in an image are salient or representative of the image’s contents. Existing classes of low-level saliency measures for image patches include those based on interest points, as well as supervised discriminative measures. We propose a new class of unsupervised contextual saliency measures based on co-occurrence and spatial information between image patches. For recognition, image patches are sampled either by weighted random sampling based on saliency, or by a sequential approach that maximizes the likelihoods of the image patches. We compare the different classes of saliency measures, along with a baseline uniform measure, on the tasks of scene and object recognition using the bag-of-features paradigm. In our results, the contextual saliency measures achieve higher accuracies than the previous methods. Moreover, our highest accuracy is achieved using a sparse sampling of the image, unlike previous approaches whose performance increases with the sampling density.
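As a rough illustration of the weighted random sampling step mentioned above, the sketch below draws patch indices without replacement, with selection probability proportional to a per-patch saliency score. It is not the authors' implementation: the abstract does not specify how the saliency scores are computed or how the sampling is carried out, so the scores are placeholder numbers, the function name `sample_patches_by_saliency` is hypothetical, and the key-based scheme (Efraimidis-Spirakis weighted sampling) is one standard choice assumed here.

```python
import random


def sample_patches_by_saliency(saliency, num_samples, seed=None):
    """Pick patch indices without replacement, favoring salient patches.

    Assumption: `saliency` is a list of non-negative scores, one per patch.
    How those scores are obtained is outside the scope of this sketch.
    """
    if num_samples < 0:
        raise ValueError("num_samples must be non-negative")
    if any(s < 0 for s in saliency):
        raise ValueError("saliency scores must be non-negative")

    rng = random.Random(seed)

    # Efraimidis-Spirakis scheme: give each patch the key u ** (1 / w),
    # where u is uniform in [0, 1) and w is its saliency. Keeping the
    # largest keys yields a weighted sample without replacement.
    # Patches with zero saliency are never selected.
    keyed = [
        (rng.random() ** (1.0 / s), index)
        for index, s in enumerate(saliency)
        if s > 0
    ]
    keyed.sort(reverse=True)
    return [index for _, index in keyed[:num_samples]]


# Placeholder saliency scores for five patches (illustrative values only).
scores = [0.05, 0.40, 0.10, 0.30, 0.15]
picked = sample_patches_by_saliency(scores, 3, seed=0)
```

A sparse sampling in this sense corresponds to a small `num_samples` relative to the number of candidate patches. With uniform scores the function reduces to the uniform baseline.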

Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Parikh, D., Zitnick, C.L., Chen, T. (2008). Determining Patch Saliency Using Low-Level Context. In: Forsyth, D., Torr, P., Zisserman, A. (eds) Computer Vision – ECCV 2008. ECCV 2008. Lecture Notes in Computer Science, vol 5303. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-88688-4_33

DOI: https://doi.org/10.1007/978-3-540-88688-4_33

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-88685-3

Online ISBN: 978-3-540-88688-4

eBook Packages: Computer Science, Computer Science (R0)