Abstract

Current facial reenactment techniques can generate results with a high level of photo-realism and temporal consistency. Although the technical possibilities are progressing rapidly, recent techniques focus on achieving fast, visually plausible results and disregard the perceptual effects caused by altering the original facial expressivity of the recorded individual. By investigating the influence of altered facial movements on the perception of expressions, we aim to generate reenactments that are not only physically possible but truly believable.

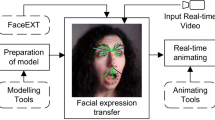

To better understand the impact of reenactments, we perform two experiments in this paper: using a modified state-of-the-art technique, we reenact a video portrait of a person with different expressions taken from a validated database of motion-captured facial expressions.

Acknowledgements

The authors gratefully acknowledge funding by the German Science Foundation (DFG MA2555/15-1 “Immersive Digital Reality”).

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Groth, C., Tauscher, JP., Castillo, S., Magnor, M. (2020). Altering the Conveyed Facial Emotion Through Automatic Reenactment of Video Portraits. In: Tian, F., et al. Computer Animation and Social Agents. CASA 2020. Communications in Computer and Information Science, vol 1300. Springer, Cham. https://doi.org/10.1007/978-3-030-63426-1_14

DOI: https://doi.org/10.1007/978-3-030-63426-1_14

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-63425-4

Online ISBN: 978-3-030-63426-1

eBook Packages: Computer Science, Computer Science (R0)